How does TidesDB work?


Introduction

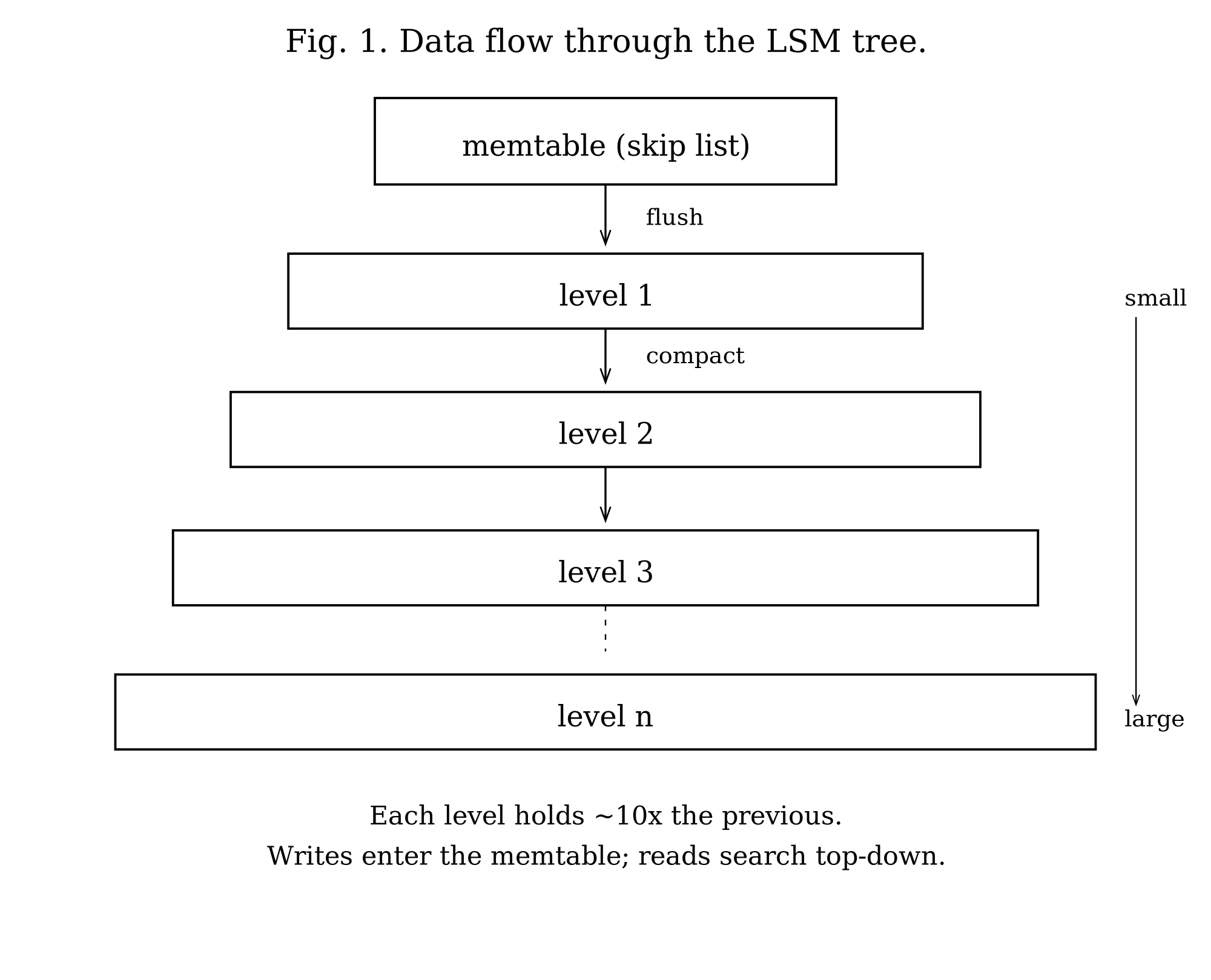

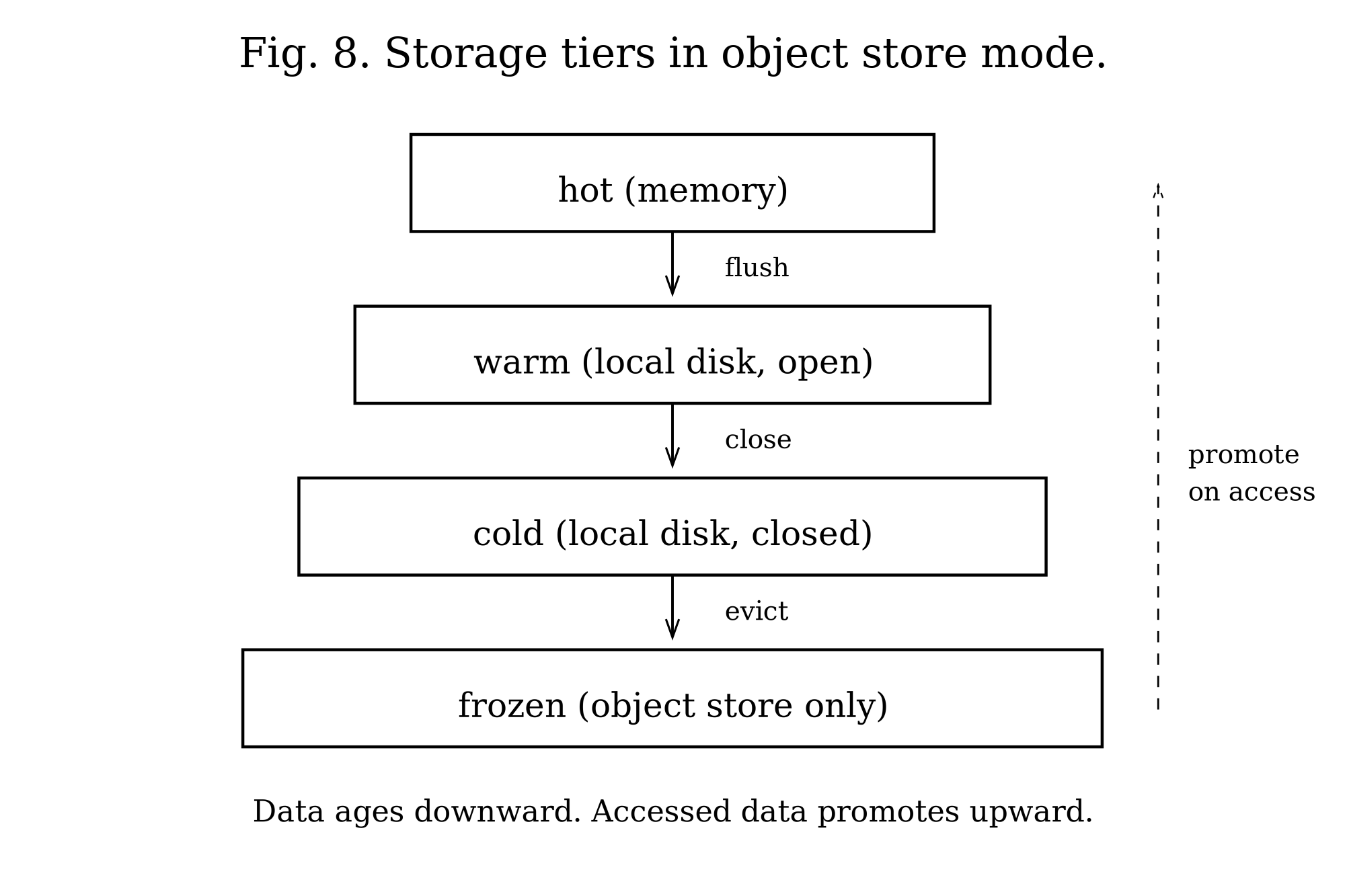

TidesDB is an embeddable key-value storage engine built on log-structured merge trees. The LSM tree is an old and well-understood idea: batch writes in memory, then flush sorted runs to disk. The cost of this approach is write amplification, since data must be written multiple times during compaction. The benefit is improved write throughput and sequential I/O patterns. The fundamental bargain is that writes become fast while reads must search multiple sorted files.

The system provides ACID transactions with five isolation levels and manages data through a hierarchy of sorted string tables (SSTables). Each level in the hierarchy holds roughly N times more data than the previous level. Compaction merges SSTables from adjacent levels, discarding obsolete entries and reclaiming space.

Data flows from memory to disk in stages (Figure 1). Writes first enter an in-memory skip list, chosen over AVL trees because it is simpler to implement and easier to make lock-free. A write-ahead log backs each skip list. When the skip list exceeds the configured write buffer size, it becomes immutable and a background worker flushes it to disk as an SSTable. These tables accumulate in levels. Compaction merges tables from adjacent levels to maintain the level size invariant.

Data Model

Column Families

The database organizes data into column families. Each column family is an independent key-value namespace with its own configuration, memtables, write-ahead logs, and disk levels. This isolation allows different column families to use different compression algorithms, comparators, and tuning parameters within the same database instance.

In the default per-column-family mode, a column family maintains one active memtable for new writes, a queue of immutable memtables awaiting flush, a write-ahead log paired with each memtable, up to 32 levels of sorted string tables on disk, and a manifest file tracking which SSTables belong to which levels.

TidesDB also supports a unified memtable mode where all column families share a single skip list and single WAL. The Unified Memtable section covers this alternative in detail.

Sorted String Tables

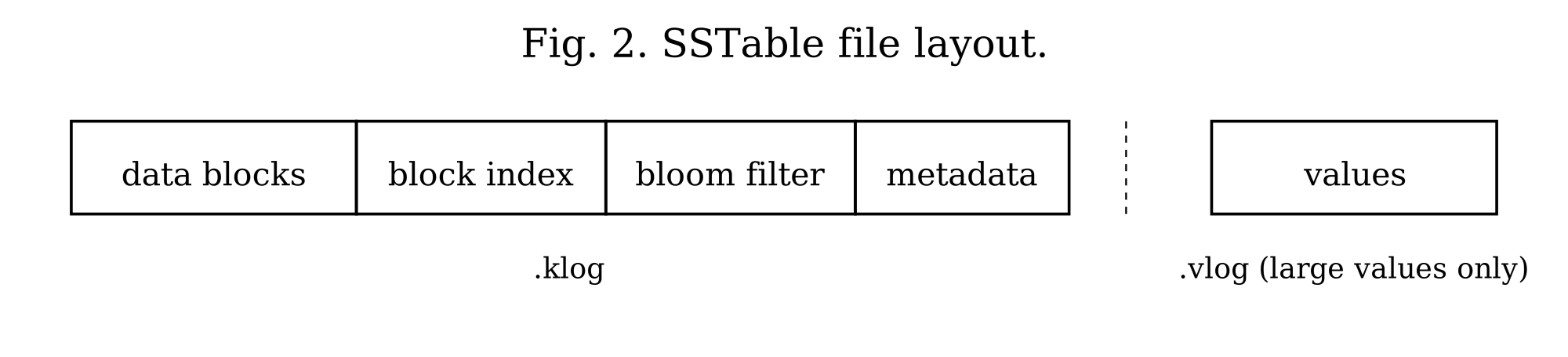

Each sorted string table consists of two files: a key log (.klog) and a value log (.vlog). The key log stores keys, metadata, and values smaller than a configurable threshold (512 bytes by default). Values meeting or exceeding this threshold reside in the value log, with the key log storing only the file offset. This separation keeps the key log compact for efficient scanning while accommodating arbitrarily large values.

The key log uses a block-based format. Each block, fixed at 64KB, contains multiple entries serialized with variable-length integer encoding. Blocks compress independently using LZ4, LZ4-FAST, Zstd, or Snappy. The key log ends with three auxiliary structures: a block index for binary search, a bloom filter for negative lookups, and a metadata block with SSTable statistics. The on-disk layout of these components is shown in Figure 2.

B+tree Format (Optional)

Column families can optionally use a B+tree structure for the key log instead of the default block-based format. This is enabled with use_btree=1 in the column family configuration. The B+tree stores all key-value entries exclusively in leaf nodes, with internal nodes containing only separator keys and child pointers for navigation. Leaf nodes are doubly-linked via prev_offset and next_offset pointers, enabling O(1) bidirectional traversal. The tree is immutable after construction: it is bulk-loaded from sorted memtable data during flush and never modified afterward.

During construction, the builder accumulates entries in a pending leaf until it reaches the target node size (64KB by default). When full, the leaf is serialized and written to disk. After all leaves are written, internal nodes are built level-by-level from separator keys extracted from each child’s first key. A backpatching pass then updates each leaf’s prev_offset and next_offset pointers to their final values. For compressed nodes, the leaf links are stored in a header before the compressed data, allowing the backpatch to update them without decompressing and recompressing the entire node.

Point lookups traverse from root to leaf using binary search at each internal node to select the correct child, then binary search within the leaf to locate the key. This yields O(log N) complexity where N is the number of nodes, compared to potentially scanning multiple 64KB blocks in the block-based format. Range scans use cursors that hold a reference to the current leaf node, advancing through entries before following the next_offset link to load the next leaf. Backward iteration follows prev_offset links similarly.

Because a key can have more than one retained version in the same leaf (a snapshot reader is holding an older version alive past the point a newer one was written), the leaf scan is not free to stop at the first match. The point lookup primitive is btree_get_at_seq: it lower-bounds to the key, then scans the contiguous run of leaf entries that share it, returning the entry with the highest sequence number at or below a caller-supplied ceiling. btree_get is preserved as a convenience wrapper that passes UINT64_MAX for the ceiling, returning the newest version. The bulk builder cooperates by keeping a key’s entire version run inside a single leaf, so the scan never crosses a leaf boundary. The ceiling is threaded through tidesdb_sstable_get_btree from the caller’s snapshot: a Repeatable Read or Snapshot transaction passes its snapshot sequence and sees the version it was supposed to see, while conflict detection passes UINT64_MAX so it sees the newest committed version regardless of what readers are holding. This makes a put-then-delete on the same key return the delete to fresh readers and still return the put to a snapshot that began before the delete committed, without race-dependent ordering between leaves.
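The ceiling-bounded lookup can be illustrated with a small sketch. This is not the TidesDB implementation: the leaf is modeled as a plain sorted list of `(key, seq, value)` tuples, a tombstone is represented by `None`, and the function names are hypothetical stand-ins for btree_get_at_seq and btree_get.

```python
import bisect

UINT64_MAX = 2**64 - 1


def get_at_seq(leaf, key, max_seq):
    """Return the entry for `key` with the highest seq <= max_seq, or None.

    `leaf` is a list of (key, seq, value) tuples sorted by key. All versions
    of a key are assumed to sit in one contiguous run inside this leaf.
    """
    keys = [entry[0] for entry in leaf]
    i = bisect.bisect_left(keys, key)  # lower-bound to the first version
    best = None
    # Scan the whole run of equal keys instead of stopping at the first match.
    while i < len(leaf) and leaf[i][0] == key:
        seq = leaf[i][1]
        if seq <= max_seq and (best is None or seq > best[1]):
            best = leaf[i]
        i += 1
    return best


def get(leaf, key):
    """Convenience wrapper: no ceiling, so the newest version wins."""
    return get_at_seq(leaf, key, UINT64_MAX)
```

With a put at sequence 10 followed by a delete at sequence 20, a reader whose snapshot is 15 gets the put, while a fresh reader gets the delete.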

The B+tree format excels at point lookups and range scans with seeks. Point lookups benefit from O(log N) tree traversal versus potentially scanning multiple 64KB blocks. Range scans with seek() navigate directly to the target key position rather than scanning sequentially. Workloads with many small-to-medium SSTables see the most improvement, as each SSTable’s hot nodes remain cached independently. The block-based format remains preferable for sequential full-table scans and for write-heavy workloads where the B+tree’s metadata overhead during flush is undesirable.

When block_cache_size is configured, TidesDB creates a dedicated clock cache for B+tree nodes. Frequently accessed nodes remain in memory as fully deserialized structures, avoiding repeated disk reads and deserialization overhead. Cache keys combine the SSTable ID and node offset to ensure uniqueness across tables. On eviction, the callback frees the node’s memory via arena destruction. Cached nodes use arena allocation, where all node memory (keys, values, metadata) is allocated from a single arena, enabling O(1) bulk deallocation when the node is evicted. When an SSTable is closed or deleted, all its cached nodes are invalidated by prefix scan to prevent stale references.

Large values meeting or exceeding the configured threshold (512 bytes by default) are written to the value log, with the leaf entry storing only the vlog offset. Nodes compress independently using LZ4, LZ4-FAST, Zstd, or Snappy. When bloom filters are enabled, they are checked before tree traversal. A negative result skips the B+tree lookup entirely, which is critical for LSM-trees where most SSTables will not contain the requested key. SSTable metadata persists five additional fields for B+tree format: root offset, first leaf offset, last leaf offset, node count, and tree height. These are restored when the SSTable is reopened.

Serialization Optimizations

The B+tree uses several techniques to minimize serialized node size.

Varint encoding is used for metadata fields such as entry counts, key sizes, value sizes, and vlog offsets. These use LEB128-style variable-length integers. Small values under 128 require only one byte; the full 64-bit range needs at most ten bytes. This typically saves 50 to 70 percent on metadata overhead compared to fixed-width integers.
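As an illustration of the size claims above, here is a minimal unsigned LEB128 encoder and decoder. The function names are hypothetical and the sketch is independent of the TidesDB source.

```python
def encode_varint(value):
    """Encode a non-negative integer as an unsigned LEB128 varint."""
    if value < 0:
        raise ValueError("unsigned varint cannot encode negative values")
    out = bytearray()
    while True:
        byte = value & 0x7F        # low 7 bits carry the payload
        value >>= 7
        if value:
            out.append(byte | 0x80)  # high bit set: more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def decode_varint(buf, pos=0):
    """Decode one varint starting at `pos`; return (value, next_pos)."""
    result = 0
    shift = 0
    while True:
        byte = buf[pos]
        pos += 1
        result |= (byte & 0x7F) << shift
        if not (byte & 0x80):
            return result, pos
        shift += 7
```

A value of 127 fits in one byte, 128 needs two, and the largest 64-bit value needs ten, since each byte carries seven payload bits.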

Prefix compression takes advantage of the fact that keys within a leaf node often share common prefixes with their predecessors. Each key stores only its suffix, with the prefix length encoded as a varint. During deserialization, keys are reconstructed by copying the prefix from the previous key. For sorted string keys with common prefixes (such as “user:1001” and “user:1002”), this achieves 60 to 80 percent key size reduction.
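The following sketch shows the idea on the example keys. It models each compressed key as a `(prefix_len, suffix)` pair rather than the actual serialized bytes, and the function names are illustrative only.

```python
def shared_prefix_len(a, b):
    """Length of the common prefix of two byte strings."""
    n = min(len(a), len(b))
    i = 0
    while i < n and a[i] == b[i]:
        i += 1
    return i


def compress_keys(sorted_keys):
    """Turn sorted keys into (prefix_len, suffix) pairs relative to the previous key."""
    out = []
    prev = b""
    for key in sorted_keys:
        p = shared_prefix_len(prev, key)
        out.append((p, key[p:]))
        prev = key
    return out


def decompress_keys(pairs):
    """Rebuild full keys by copying the prefix from the previously rebuilt key."""
    keys = []
    prev = b""
    for prefix_len, suffix in pairs:
        key = prev[:prefix_len] + suffix
        keys.append(key)
        prev = key
    return keys
```

For `user:1001` followed by `user:1002`, the second key is stored as a prefix length of 8 plus the single suffix byte `2`.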

A key indirection table in each leaf node contains 2-byte offsets pointing to each key’s position within the node. This enables O(1) random access to any key during binary search without scanning through variable-length prefix-compressed keys sequentially.

Delta sequence encoding stores sequence numbers within a leaf as signed deltas from a base sequence number (the minimum in the node). Since entries in a leaf typically have similar sequence numbers, deltas are small and compress well with varint encoding. TTL values use zigzag encoding for efficient signed integer representation.
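A short sketch of both encodings follows. Zigzag is shown in its standard 64-bit form. The delta helper assumes the base is the minimum sequence in the node, as described above, so its deltas come out non-negative. Names are hypothetical.

```python
MASK64 = 0xFFFFFFFFFFFFFFFF


def zigzag_encode(n):
    """Map a signed 64-bit integer to unsigned: 0, -1, 1, -2 -> 0, 1, 2, 3."""
    return ((n << 1) ^ (n >> 63)) & MASK64


def zigzag_decode(z):
    """Inverse of zigzag_encode."""
    return (z >> 1) ^ -(z & 1)


def delta_encode_seqs(seqs):
    """Return (base, deltas) where base is the minimum sequence in the node."""
    base = min(seqs)
    return base, [s - base for s in seqs]


def delta_decode_seqs(base, deltas):
    """Rebuild the original sequence numbers."""
    return [base + d for d in deltas]
```

Small magnitudes, positive or negative, map to small unsigned integers, which then fit in one or two varint bytes.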

Internal nodes store child offsets as sequential signed deltas. Each offset is encoded as the difference from the previous child’s offset, starting from a base offset (the first child). Since child nodes are typically written sequentially, deltas are small positive values that compress efficiently.

Leaf node format

[type:1][num_entries:varint][prev_offset:8][next_offset:8][key_offsets_table: num_entries × 2 bytes][base_seq:varint][entries: prefix_len:varint, suffix_len:varint, value_size:varint, vlog_offset:varint, seq_delta:signed_varint, ttl:signed_varint, flags:1][keys: prefix-compressed suffixes][values: inline values only]

Internal node format

[type:1][num_keys:varint][base_offset:8][child_offset_deltas: signed_varint × (num_keys + 1)][key_sizes: varint × num_keys][separator_keys: raw key bytes]

File Format

Each klog entry uses this format:

flags (1 byte)
key_size (varint)
value_size (varint)
seq (varint)
ttl (8 bytes, if HAS_TTL flag set)
vlog_offset (varint, if HAS_VLOG flag set)
key (key_size bytes)
value (value_size bytes, if inline)

The flags byte encodes tombstones (0x01), TTL presence (0x02), value log indirection (0x04), and delta sequence encoding (0x08). Variable-length integers save space: a value under 128 requires one byte, while the full 64-bit range needs at most ten bytes.
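To make the field order concrete, here is a hedged sketch of an encoder for one klog entry. The flag values and field order come from the description above. The little-endian signed TTL, the constant names, and the function signature are assumptions made for illustration, and delta sequence encoding (0x08) is left out.

```python
import struct

FLAG_TOMBSTONE = 0x01
FLAG_HAS_TTL = 0x02
FLAG_HAS_VLOG = 0x04


def varint(n):
    """Unsigned LEB128 encoding of a non-negative integer."""
    out = bytearray()
    while n >= 0x80:
        out.append((n & 0x7F) | 0x80)
        n >>= 7
    out.append(n)
    return bytes(out)


def encode_klog_entry(key, value, seq, ttl=None, vlog_offset=None, tombstone=False):
    """Serialize one entry; the value is written inline only without a vlog offset."""
    flags = 0
    if tombstone:
        flags |= FLAG_TOMBSTONE
    if ttl is not None:
        flags |= FLAG_HAS_TTL
    if vlog_offset is not None:
        flags |= FLAG_HAS_VLOG
    out = bytearray([flags])
    out += varint(len(key))
    out += varint(len(value))
    out += varint(seq)
    if ttl is not None:
        out += struct.pack("<q", ttl)  # assumption: 8-byte little-endian signed
    if vlog_offset is not None:
        out += varint(vlog_offset)
    out += key
    if vlog_offset is None:
        out += value                   # large values live in the vlog instead
    return bytes(out)
```

A one-byte key with a one-byte inline value and a small sequence number costs six bytes in total, four of which are metadata.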

In per-column-family mode, write-ahead logs use the same format. Each memtable has its own WAL file, named by the SSTable ID it will become. Recovery reads these files in sequence order, deserializes entries into skip lists, and enqueues them for asynchronous flushing. In unified memtable mode, per-CF WAL files are not created since all writes go through the unified WAL. This avoids wasted I/O, file descriptors, and empty WAL file artifacts in column family directories.

When unified memtable mode is enabled, all column families share a single WAL per memtable generation. The unified WAL uses a different batch format: a 2-byte magic prefix (0x55AA) followed by entries, each prefixed with a 4-byte big-endian column family index before the standard flags, varints, and key-value data. During recovery, the system detects unified WAL files by their filename prefix (uwal_) and magic bytes, deserializes entries back into the unified skip list with their CF index prefixes intact, and flushes them through the standard unified flush path.

Transactions

Isolation Levels

The system provides five isolation levels.

Read Uncommitted sees all versions, including uncommitted ones. The snapshot sequence is set to UINT64_MAX.

Read Committed performs no validation. Each read refreshes its snapshot to see the most recently committed version.

Repeatable Read detects if any read key changed between read and commit time. The transaction tracks each key it reads along with the sequence number of the version it saw. At commit, it checks whether a newer version exists.

Snapshot Isolation uses first-committer-wins semantics with write-write conflict detection only. No read set is tracked. If another transaction committed a write to the same key after this transaction’s snapshot time, the commit aborts. Write skew anomalies, where two transactions read overlapping data and write disjoint sets, are explicitly allowed. This matches standard database theory where snapshot isolation requires only write-write conflict detection.

Serializable implements serializable snapshot isolation (SSI). In addition to the write-write conflict detection from snapshot isolation, the system tracks read-write conflicts. Each transaction maintains a read set consisting of arrays of CF pointers, keys, key sizes, and sequence numbers. Only Repeatable Read and Serializable allocate read sets. The system creates a hash table (tidesdb_read_set_hash_t) using xxHash for O(1) conflict detection when the read set exceeds TDB_TXN_READ_HASH_THRESHOLD (64 reads). At commit, it checks all concurrent transactions: if transaction T reads key K that another transaction T' writes, it sets T.has_rw_conflict_out = 1 and T'.has_rw_conflict_in = 1. If both flags are set, meaning the transaction is a pivot in a dangerous structure, the commit aborts.

This is a simplified SSI. It detects pivot transactions but does not maintain a full precedence graph or perform cycle detection. False aborts are possible because a transaction can have both flags set without being part of an actual dependency cycle.
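The flag-setting rule can be sketched as follows. The `Txn` class and function names are hypothetical, and read and write sets are modeled as Python sets of keys rather than the array and hash table structures the text describes.

```python
class Txn:
    """Toy transaction carrying only what pivot detection needs."""

    def __init__(self, name, reads=(), writes=()):
        self.name = name
        self.read_set = set(reads)
        self.write_set = set(writes)
        self.has_rw_conflict_in = False
        self.has_rw_conflict_out = False


def mark_rw_conflicts(txn, concurrent):
    """If `txn` read a key that a concurrent transaction writes, flag both sides."""
    for other in concurrent:
        if other is txn:
            continue
        if txn.read_set & other.write_set:
            txn.has_rw_conflict_out = True   # txn -> other (read before write)
            other.has_rw_conflict_in = True


def is_pivot(txn):
    """A pivot has both an incoming and an outgoing read-write conflict."""
    return txn.has_rw_conflict_in and txn.has_rw_conflict_out
```

In a chain where T1 reads a key that T2 writes and T2 reads a key that T3 writes, only T2 ends up with both flags set, so only T2 would abort.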

Multi-Version Concurrency Control

Each transaction receives a snapshot sequence number at begin time. For Read Uncommitted, this is UINT64_MAX (sees all versions). For Read Committed, it refreshes on each read. For Repeatable Read, Snapshot, and Serializable, the snapshot is global_seq - 1, capturing all transactions committed before this one started.

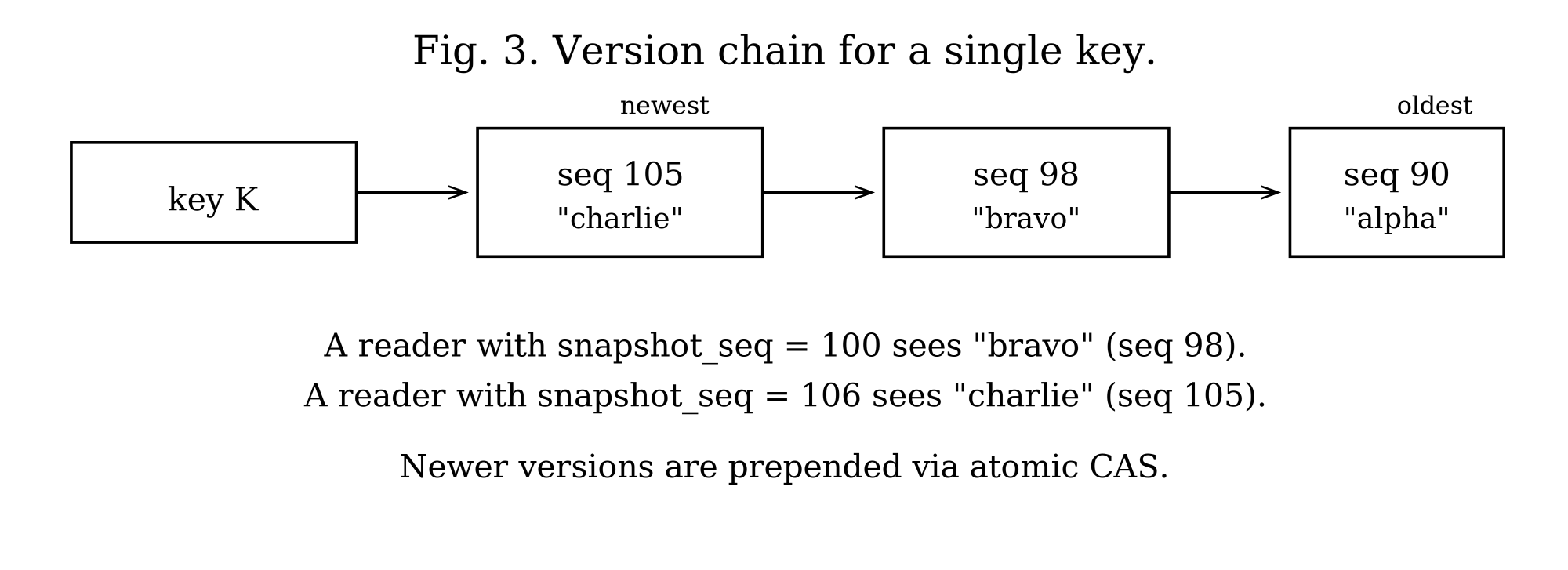

The snapshot sequence determines which versions the transaction sees. It reads the most recent version with sequence number less than or equal to its snapshot sequence. Each key maintains a chain of versions, newest first, as illustrated in Figure 3.

At commit time, the system assigns a commit sequence number from a global atomic counter. It writes operations to the write-ahead log, applies them to the active memtable with the commit sequence, and marks the sequence as committed in a fixed-size circular buffer (defined by TDB_COMMIT_STATUS_BUFFER_SIZE, currently 65536 entries). The buffer wraps around: sequence N maps to slot N % 65536. When the buffer wraps, old entries are overwritten, so visibility checks for very old sequences may return incorrect results. In practice, this is acceptable because transactions with sequence numbers more than 65536 behind the current sequence are extremely rare. Readers skip versions whose sequence numbers are not yet marked committed.
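A minimal sketch of the wraparound behavior, together with snapshot-bounded version selection, is shown below. The slot layout is an assumption: each slot here stores the last sequence marked committed, so an overwritten sequence simply reads as not committed. The real buffer layout is not described in this document, and the class and function names are hypothetical.

```python
BUFFER_SIZE = 65536  # mirrors TDB_COMMIT_STATUS_BUFFER_SIZE from the text


class CommitStatusBuffer:
    """Fixed-size circular buffer: sequence N maps to slot N % size."""

    def __init__(self, size=BUFFER_SIZE):
        self.size = size
        self.slots = [None] * size

    def mark_committed(self, seq):
        self.slots[seq % self.size] = seq  # overwrites whatever wrapped here

    def is_committed(self, seq):
        return self.slots[seq % self.size] == seq


def visible_version(chain, snapshot_seq, status):
    """Pick the newest committed version with seq <= snapshot_seq.

    `chain` is a list of (seq, value) pairs ordered newest first.
    """
    for seq, value in chain:
        if seq <= snapshot_seq and status.is_committed(seq):
            return seq, value
    return None
```

With a deliberately tiny buffer of 8 slots, marking sequence 11 overwrites the slot that sequence 3 used, which shows why very old sequences can no longer be answered correctly after a wrap.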

Long-running readers are protected by reader-bound version retention. The active transaction list exposes the minimum snapshot sequence held by any live reader via tidesdb_min_active_snapshot_seq. Compaction and flush consult this floor at every emit site and retain superseded versions whose sequence number is at or above it, even when a newer version is also present in the merge input. Without this floor a long-running snapshot transaction could observe a version disappear mid-iteration because a background compaction had decided the newest version was sufficient. The retention floor is recomputed on the fly so readers that finish (commit, rollback, or free) immediately let the next compaction drop the versions they were holding. To keep that floor honest, tidesdb_txn_free removes the transaction from the active list as part of its teardown. A caller that frees a transaction without first committing or rolling back can no longer leave a dangling snapshot pointer that compaction would otherwise dereference.

Iterators participate in the same scheme. When the iterator is created it captures the active memtable, the per-CF immutables, and (when unified mode is enabled) every entry in unified_mt.immutables as merge sources. The unified immutables in particular are essential: a flush worker that rotated the unified memtable but has not yet demuxed the immutable into per-CF SSTables would otherwise leave the iterator with neither the in-memory nor the on-disk copy of those entries. With the immutable queue snapshotted into the iterator’s source list, the iterator sees every committed write visible to its snapshot sequence regardless of where on the flush pipeline that write currently lives.

Multi-Column Family Transactions

TidesDB achieves multi-column-family transactions through a design where the transaction structure maintains an array of all involved column families. When you commit, it assigns operations across all these column families the same sequence number from a global atomic counter shared throughout the database. This shared sequence number serves as a lightweight coordination mechanism that ensures atomicity without the overhead of traditional two-phase commit protocols. Each column family’s write-ahead log records its operations with this same sequence number, effectively synchronizing the commit across all involved column families in a single atomic step.

Write Path

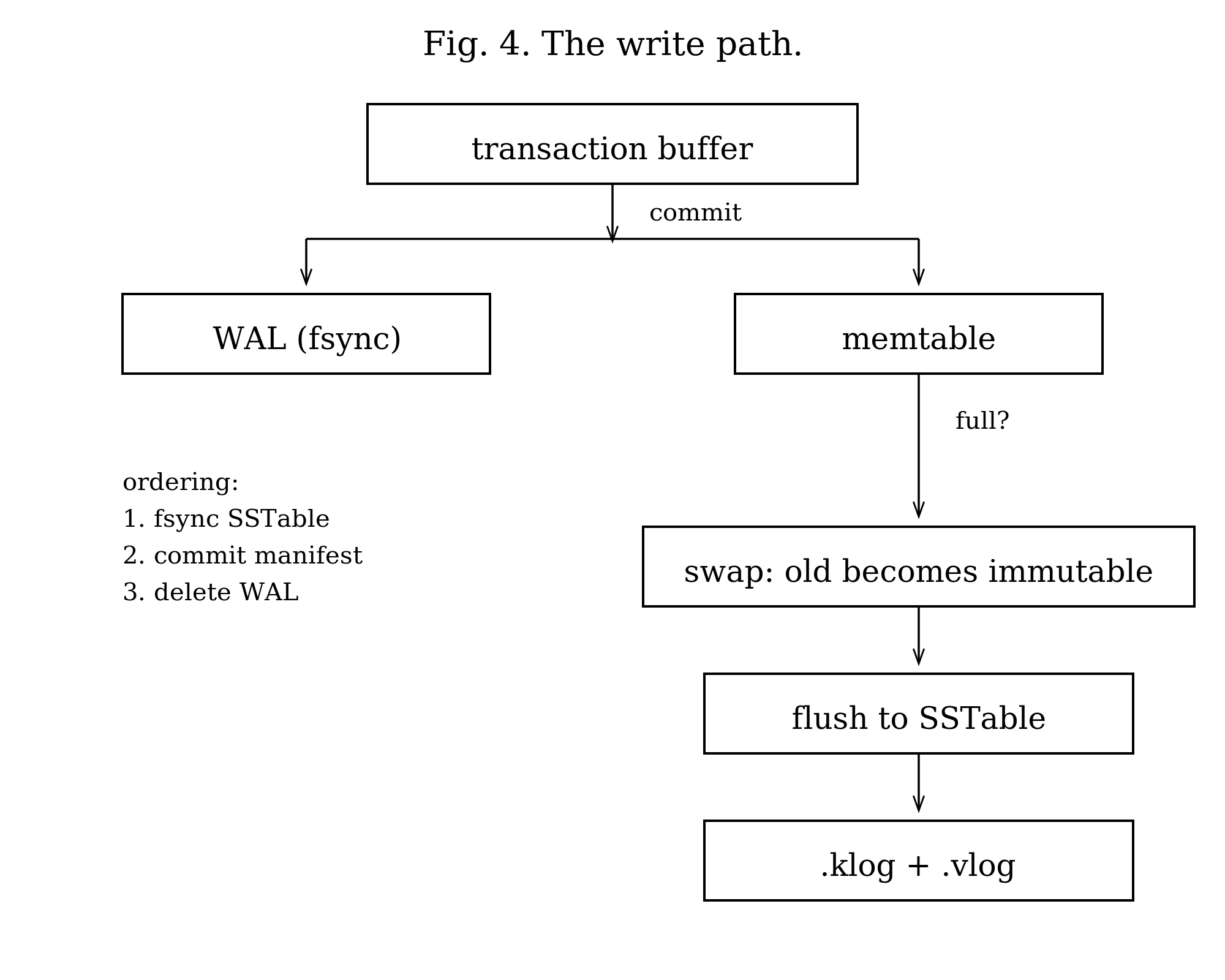

The path a write takes from the application to durable storage is shown in Figure 4.

Transaction Commit

A transaction buffers operations in memory until commit. At commit time, the system validates according to the isolation level, assigns a commit sequence number from the global counter, serializes operations to each column family’s write-ahead log, applies operations to the active memtable with the commit sequence, marks the commit sequence as committed in the status buffer, and checks if any memtable exceeds its adaptive flush threshold.

For Snapshot Isolation and higher, commit-time validation checks each key in the write set against the active memtable, unified memtable (if enabled), immutable memtables, and SSTables across all levels. Two optimizations reduce the cost of this check. First, SSTables whose maximum sequence number predates the transaction’s snapshot are skipped entirely without acquiring a reference, checking the bloom filter, or reading any blocks, since no entry in them can conflict. In a typical workload the vast majority of SSTables predate any active transaction, so this eliminates most of the I/O from conflict detection. Second, when an SSTable does need to be probed, the search uses a seq-only mode that finds the key through the bloom filter, block index, and block search path but returns only the sequence number without allocating a key-value pair, copying the value, or reading from the value log.

The transaction uses hash-based deduplication to apply only the final operation for each key. The hash table is created lazily when the transaction exceeds TDB_TXN_DEDUP_SKIP_THRESHOLD (8 operations) and is sized at TDB_TXN_DEDUP_HASH_MULTIPLIER (2x) the number of operations with a minimum of TDB_TXN_DEDUP_MIN_HASH_SIZE (64 slots). The hash function is fast and non-cryptographic, so collisions are possible but rare; a collision would cause the transaction to write both operations to the memtable (the skip list handles duplicates correctly). This optimization reduces memtable size when a transaction modifies the same key multiple times.
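The effect of deduplication and the sizing rule can be sketched like this. The sketch uses a Python dict, which never conflates distinct keys, so it does not reproduce the collision case. The constants mirror the values quoted above, and the function names are hypothetical.

```python
DEDUP_SKIP_THRESHOLD = 8   # TDB_TXN_DEDUP_SKIP_THRESHOLD
DEDUP_HASH_MULTIPLIER = 2  # TDB_TXN_DEDUP_HASH_MULTIPLIER
DEDUP_MIN_HASH_SIZE = 64   # TDB_TXN_DEDUP_MIN_HASH_SIZE


def dedup_table_size(num_ops):
    """Slots to allocate, or 0 when the transaction is too small to bother."""
    if num_ops <= DEDUP_SKIP_THRESHOLD:
        return 0
    return max(DEDUP_MIN_HASH_SIZE, DEDUP_HASH_MULTIPLIER * num_ops)


def dedup_last_write_wins(ops):
    """Keep only the final operation per (cf, key), preserving issue order.

    `ops` is a list of (cf, key, op, value) tuples in the order they were issued.
    """
    last_index = {}
    for i, (cf, key, _op, _value) in enumerate(ops):
        last_index[(cf, key)] = i
    return [op for i, op in enumerate(ops) if last_index[(op[0], op[1])] == i]
```

A put followed later by a delete of the same key collapses to just the delete, so the memtable receives one entry for that key instead of two.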

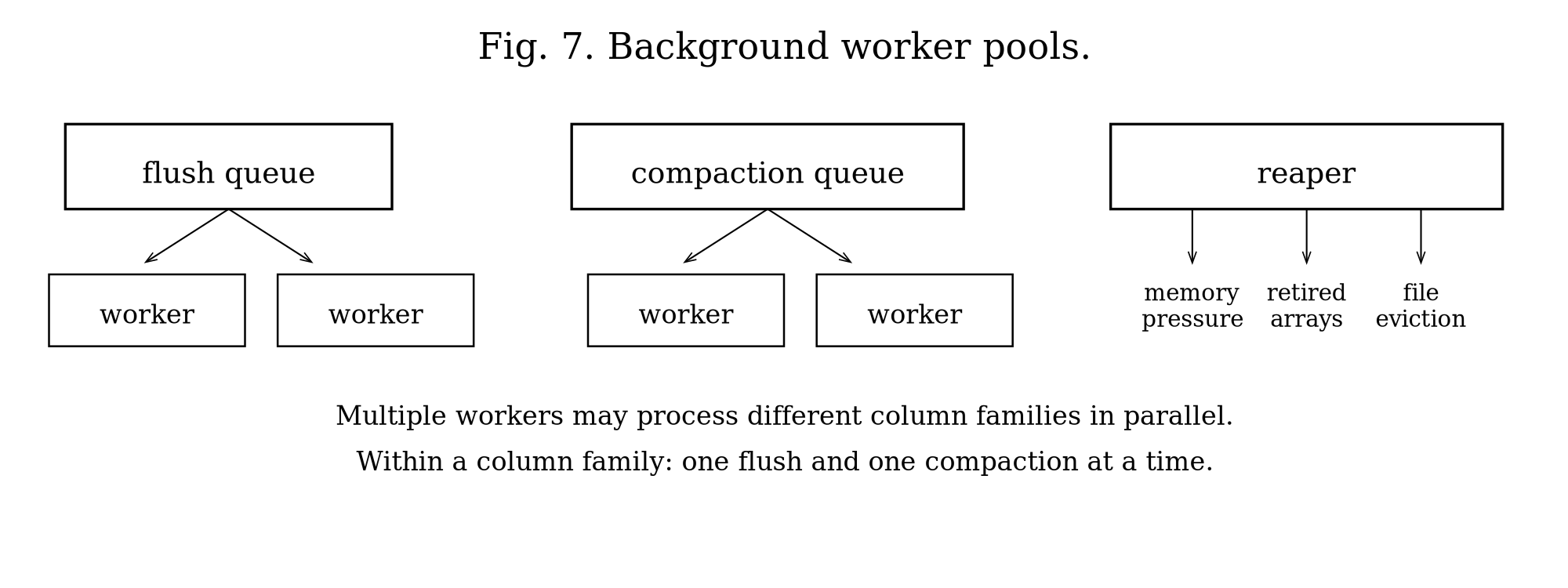

Memtable Flush

When a memtable exceeds the flush threshold, the system atomically swaps in a new empty memtable and enqueues the old one for flushing. The swap takes one atomic store with a memory fence for visibility.

Adaptive Flush Threshold

The flush threshold is not a fixed value. At transaction commit, the system adjusts the threshold based on L0 immutable queue pressure to balance write batching against memory pressure. When the L0 queue is empty (idle), the threshold is 150% of write_buffer_size, providing 50% headroom and allowing the memtable to accumulate more data before flushing for better batching. When one or more immutables are pending but below half the stall threshold (moderate pressure), the threshold drops to 125% of write_buffer_size, providing 25% headroom. When the L0 queue depth reaches 50% or more of l0_queue_stall_threshold (high pressure), the threshold equals write_buffer_size exactly, with zero headroom, triggering an immediate flush. This adaptive mechanism reduces flush frequency during idle periods, improving write throughput, while ensuring rapid flushing under pressure to prevent memory buildup. With the default 64MB write buffer, the effective threshold ranges from 64MB under pressure to 96MB when idle.
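The three tiers reduce to a small function. This is a sketch of the rule as described, not the actual TidesDB code. The function name is hypothetical, and integer arithmetic is used to avoid floating-point rounding.

```python
def adaptive_flush_threshold(write_buffer_size, l0_queue_depth, l0_queue_stall_threshold):
    """Return the memtable size at which a flush is triggered."""
    if l0_queue_depth == 0:
        return write_buffer_size * 3 // 2      # idle: 150%
    if l0_queue_depth * 2 >= l0_queue_stall_threshold:
        return write_buffer_size               # high pressure: 100%
    return write_buffer_size * 5 // 4          # moderate pressure: 125%
```

With a 64MB buffer and a stall threshold of 20, the result is 96MB when the queue is empty, 80MB with a few pending immutables, and 64MB once ten or more are queued.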

A flush worker dequeues the immutable memtable and creates an SSTable. It iterates the skip list in sorted order, writing entries to 64KB blocks. Values meeting or exceeding the threshold (512 bytes by default) go to the value log; the key log stores only the file offset. The worker optionally compresses each block, writes the block index and bloom filter, and appends metadata. It then fsyncs both files, adds the SSTable to level 1, commits to the manifest, and deletes the write-ahead log.

The ordering of these steps is critical. Fsync before manifest commit ensures the SSTable is durable before it becomes discoverable. Manifest commit before WAL deletion ensures crash recovery can find the data.

Crash Scenarios

If the system crashes after fsync but before manifest commit, the SSTable exists on disk but is not discoverable. Recovery detects it is not in the manifest and deletes it at startup. If it crashes after manifest commit but before WAL deletion, recovery finds both the SSTable and the WAL. It flushes the WAL again, creating a duplicate SSTable. The manifest deduplicates by SSTable ID.

Validation Modes

WAL files use permissive validation (block_manager_validate_last_block(bm, 0)). If the last block has invalid footer magic or incomplete data, the system truncates the file to the last valid block by walking backward through the file. This handles crashes during WAL writes. If no valid blocks exist, it truncates to the header only.

SSTables, by contrast, use strict validation (block_manager_validate_last_block(bm, 1)). Any corruption in the last block causes the SSTable to be rejected entirely. This reflects the different nature of these files: SSTables are permanent and must be correct.

Unified Memtable

By default, each column family maintains its own skip list and WAL. This means a transaction touching N column families performs N WAL writes, one per column family. For workloads that create many column families per logical entity (for example, a MariaDB plugin where each table has one data CF plus N secondary index CFs), this multiplies WAL I/O linearly with the number of column families involved in each transaction.

Unified memtable mode replaces all per-CF skip lists and WALs with a single shared skip list and a single WAL at the database level. All column families write into the same memtable and the same WAL file, reducing N WAL writes per transaction to exactly one regardless of how many column families are involved.

Key Isolation

Column families share the same skip list but must not see each other’s keys. The system assigns each column family a unique 4-byte index (unified_cf_index) at creation time, allocated from an atomic counter (next_cf_index) and persisted to disk (see CF Index Persistence below). Keys in the unified skip list are prefixed with this 4-byte big-endian index before the user key. The prefix ensures keys from different column families sort into contiguous groups (all keys for CF 0 come before CF 1, and so on) and that lookups and iterations only see keys belonging to the target column family. All column families sharing a unified memtable must use the same comparator (enforced at creation), because the shared skip list has a single sort order. The unified skip list itself always uses memcmp as its comparator, which correctly orders the 4-byte big-endian prefix numerically and then sorts the suffixed user keys in byte order.
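The reason for a big-endian prefix is easiest to see in a sketch. The helper names below are illustrative and are not the signature of tdb_build_prefixed_key().

```python
import struct


def build_prefixed_key(cf_index, user_key):
    """Prepend the 4-byte big-endian column family index to the user key."""
    return struct.pack(">I", cf_index) + user_key


def split_prefixed_key(prefixed):
    """Return (cf_index, user_key) from a prefixed key."""
    (cf_index,) = struct.unpack(">I", prefixed[:4])
    return cf_index, prefixed[4:]
```

Because the most significant byte comes first, plain bytewise comparison (what memcmp does) orders keys by CF index numerically and then by user key. With a little-endian prefix, index 256 would sort before index 1.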

CF Index Persistence

The 4-byte CF index lives at the head of every key in the unified WAL and every key in the shared skip list. If the index drifts between runs the entire unified WAL replays under the wrong CFs, which is a silent data-loss bug rather than a crash. To make the index survive crashes and cold starts, every column family’s name-to-index mapping is persisted in a UNIMAP file at the database root and rewritten atomically (UNIMAP.tmp plus rename plus tdb_sync_directory) whenever the map changes. UNIMAP is keyed on column family name because name is the only identity that survives a crash: directory ordering, manifest ordering, and atomic counters all reset, but the name written into config.ini does not.

The map is loaded before WAL replay during recovery (tidesdb_unimap_load), so column families registered at create-time receive the same unified_cf_index they had before the crash, and replayed entries from the unified WAL land in the right skip list groups. Drops and renames keep the map current via tidesdb_unimap_persist, and next_cf_index is advanced past the maximum loaded index so newly created column families never collide with persisted ones. In object-store mode UNIMAP is uploaded alongside config.ini and MANIFEST, so a cold-started replica reconstructs the indexes the primary assigned rather than picking its own.
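The write-temp, rename, sync-directory pattern can be sketched as below for a POSIX system. The JSON encoding is purely an assumption for illustration, since the actual UNIMAP file format is not described here, and the function names are hypothetical.

```python
import json
import os


def persist_map_atomically(db_root, name_to_index):
    """Write the map to UNIMAP.tmp, fsync it, rename over UNIMAP, fsync the directory."""
    final_path = os.path.join(db_root, "UNIMAP")
    tmp_path = final_path + ".tmp"
    with open(tmp_path, "w", encoding="utf-8") as f:
        json.dump(name_to_index, f, sort_keys=True)  # assumed format, not the real one
        f.flush()
        os.fsync(f.fileno())           # file contents durable before the rename
    os.replace(tmp_path, final_path)   # atomic swap on POSIX filesystems
    dir_fd = os.open(db_root, os.O_RDONLY)
    try:
        os.fsync(dir_fd)               # make the rename itself durable
    finally:
        os.close(dir_fd)


def load_map(db_root):
    """Return (name_to_index, next_cf_index); next index is past the maximum loaded."""
    path = os.path.join(db_root, "UNIMAP")
    if not os.path.exists(path):
        return {}, 0
    with open(path, encoding="utf-8") as f:
        mapping = json.load(f)
    next_index = max(mapping.values(), default=-1) + 1
    return mapping, next_index
```

A reader of the directory sees either the old complete file or the new complete file, never a partial write, and newly created column families start from an index that cannot collide with a persisted one.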

Write Path in Unified Mode

At transaction commit, the system serializes all operations across all column families into a single unified WAL batch. The batch begins with a 2-byte magic (0x55AA) followed by entries, each prefixed with the 4-byte big-endian column family index. The entire batch is written as a single block to the unified WAL. Operations are then applied to the unified skip list with prefixed keys using tdb_build_prefixed_key(), which prepends the 4-byte CF index to each user key. For a transaction touching 5 column families, this results in 1 WAL write instead of 5.

Read Path in Unified Mode

When reading a key from a specific column family, the system constructs the prefixed key (4-byte CF index + user key) and searches the unified active skip list, then unified immutable memtables (newest to oldest), then falls back to the per-CF SSTable levels. The prefixed key lookup in the skip list is an exact match, so keys from other column families are never visible. Immutable unified memtables that have already been flushed are skipped (via a flushed flag check) to avoid returning stale data that is already durable in SSTables.

Flush Demuxing

When the unified memtable exceeds unified_memtable_write_buffer_size (which defaults to the same value as write_buffer_size, 64MB), it becomes immutable and is enqueued for flushing. Flushing the unified immutable runs in two phases so that per-CF SSTable writes parallelize across the worker pool rather than running sequentially in one worker. Phase one is the demux. The dispatching worker walks the unified immutable’s cursor in sorted order, and since keys are sorted by 4-byte CF index followed by the user key, consecutive entries with the same prefix belong to the same column family. The worker builds one temporary skip list per non-empty CF, populated with stripped keys (CF prefix removed) and using each CF’s own comparator. This phase is unavoidably sequential because the cursor is sequential, but it does only in-memory work.
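The demux phase amounts to grouping a sorted stream by its 4-byte prefix. In this sketch, plain Python lists stand in for the temporary per-CF skip lists, and the function name is hypothetical.

```python
import struct
from itertools import groupby


def cf_index_of(entry):
    """Extract the big-endian CF index from a (prefixed_key, value) entry."""
    return struct.unpack(">I", entry[0][:4])[0]


def demux(unified_entries):
    """Split a sorted stream of (prefixed_key, value) into per-CF lists.

    Input must be sorted by prefixed key, so each CF forms one contiguous run.
    Keys in the output have the 4-byte prefix stripped.
    """
    per_cf = {}
    for cf_index, run in groupby(unified_entries, key=cf_index_of):
        per_cf[cf_index] = [(key[4:], value) for key, value in run]
    return per_cf
```

A single sequential pass is enough because the sort order guarantees that a column family's entries never interleave with another's.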

Phase two is the dispatch. The worker allocates a shared completion barrier and enqueues one per-CF flush task per temporary skip list onto the shared flush queue, then returns. Any worker can pick up a per-CF task. Each task writes its temp skip list to an SSTable in the target CF’s level 1, commits the manifest, triggers a per-CF compaction if thresholds are met, and decrements the barrier. The task that brings the barrier to zero owns the unified WAL cleanup, closes the segment, optionally uploads it to the object store, and marks the unified memtable flushed. With N non-empty column families and M flush workers, the per-CF SSTable writes complete in roughly N/M sequential rounds rather than N. The on-disk format and read path through SSTables remain identical to per-CF mode.

If barrier or work-item allocation fails, the dispatcher falls back to writing the affected splits inline so no data is lost. If a per-CF write fails, the error is recorded on the barrier and logged by the worker, while siblings continue to make progress.
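The barrier's role, where the last finisher owns cleanup and errors are recorded without stopping siblings, can be sketched with a lock-protected counter. The class is hypothetical, and the real implementation presumably uses atomics rather than a mutex.

```python
import threading


class CompletionBarrier:
    """Counts outstanding per-CF flush tasks and remembers the first error."""

    def __init__(self, count):
        self._remaining = count
        self._lock = threading.Lock()
        self.first_error = None

    def task_done(self, error=None):
        """Record completion; return True only for the task that reaches zero."""
        with self._lock:
            if error is not None and self.first_error is None:
                self.first_error = error
            self._remaining -= 1
            return self._remaining == 0
```

Exactly one caller sees `True`, so WAL cleanup runs once no matter which worker finishes last, and a failed sibling does not prevent the others from completing.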

Rotation

The rotation mechanism mirrors per-CF memtable rotation. A CAS-based admission gate (unified_mt.is_flushing) ensures only one thread enters rotation at a time. The rotating thread creates a new skip list and WAL, atomically swaps the active pointer, enqueues the old memtable as an immutable, and submits a flush work item with cf=NULL to signal unified flush dispatch. The flush worker detects cf==NULL and routes to tidesdb_unified_flush_immutable().

Three concurrency invariants make the rotate-and-flush handoff safe. First, tidesdb_flush_memtable takes the unified_mt.is_flushing CAS admission before calling rotate. Two callers that race into the public flush API can no longer both observe the same active memtable, rotate twice, and enqueue the same immutable twice. Second, the write path captures the active pointer, then calls try_ref, then revalidates that unified_mt.active still points at the pinned memtable and retries on the new active if rotation slipped in between the load and the ref. Without the revalidation a writer could hold a ref to a memtable that had already been demoted to an immutable, putting it into a state where the flush worker treats the entries as written-and-readable while the writer is still mid-block-write. Third, the flush worker waits for the unified memtable’s writer refcount to drain to baseline before closing the WAL. Any writer that captured the active pointer just before rotation completes its block_manager_write_raw call into the now-immutable memtable’s WAL before the close fires, so the close cannot tear down a file descriptor that a live pwrite still references.

Backpressure in Unified Mode

Even though the memtable is shared, backpressure is still applied per column family. At commit time, the system iterates all CFs involved in the transaction and calls tidesdb_apply_backpressure() on each, which checks that CF’s L0 immutable queue depth and L1 file count. This ensures that individual column families falling behind on flush or compaction still throttle writes appropriately.

WAL Lifetime

Each unified WAL has a one-to-one relationship with the unified immutable memtable it belongs to. There is no generation refcounting. The WAL is created when the unified memtable is created. After the flush worker successfully demuxes all entries into per-CF SSTables and commits their manifests, the closed WAL segment is uploaded to the object store if replicate_wal is enabled, then deleted locally. In non-object-store mode the WAL is deleted immediately after flush.

Configuration

Unified memtable mode is enabled via unified_memtable = 1 in tidesdb_config_t. Additional configuration fields control the write buffer size (unified_memtable_write_buffer_size), skip list parameters (unified_memtable_skip_list_max_level, unified_memtable_skip_list_probability), and WAL sync mode (unified_memtable_sync_mode, unified_memtable_sync_interval_us). When unified_memtable_write_buffer_size is 0, it defaults to TDB_DEFAULT_WRITE_BUFFER_SIZE (64MB).

Write Backpressure and Flow Control

When writes arrive faster than flush workers can persist memtables to disk, immutable memtables accumulate in the flush queue. Without throttling, this causes unbounded memory growth. The system implements graduated backpressure based on the L0 immutable queue depth and L1 file count.

Each column family maintains a queue of immutable memtables awaiting flush. When the active memtable exceeds the adaptive flush threshold, it becomes immutable and enters this queue. A flush worker dequeues it asynchronously and writes it to an SSTable at level 1. The queue depth indicates how far behind the flush workers are.

The system monitors two metrics: L0 queue depth, meaning the number of immutable memtables in the flush queue (configurable threshold, default 20), and L1 file count, meaning the number of SSTables at level 1 (configurable trigger, default 4). The system then applies increasing delays to transaction commits based on pressure, once per column family per commit.

At moderate pressure (50% of stall threshold or 3x L1 trigger), writes sleep for 0.5ms. This gently slows the write rate without significantly impacting throughput. At 50% of the default threshold (10 immutable memtables), writes experience minimal latency increase. The 0.5ms delay provides flush workers CPU time while remaining barely noticeable in multi-threaded workloads.

At high pressure (80% of stall threshold or 4x L1 trigger), writes sleep for 2ms. This more aggressively reduces write throughput to give flush and compaction workers time to catch up. At 80% of the default threshold (16 immutable memtables), write latency increases noticeably but writes continue. The 4x escalation in delay (from 0.5ms to 2ms) creates a non-linear control response. Since flush operations take roughly 120ms, the 2ms delay gives workers meaningful time to drain the queue.

At the stall threshold (100% or above), writes block completely until the queue drains below the threshold. The system checks queue depth every 10ms, waiting up to 10 seconds before timing out with an error. This prevents memory exhaustion when flush workers cannot keep pace. At the default threshold of 20 immutable memtables, all writes stall until flush workers reduce the queue depth. The 10ms check interval balances responsiveness with syscall overhead.
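The three tiers above can be summarized as a small classification function. The following is a sketch under stated assumptions, not the body of tidesdb_apply_backpressure(): the names bp_level_t, bp_classify, and bp_delay_us are hypothetical, and treating each boundary as inclusive (greater than or equal) is an assumption.

```c
#include <assert.h>

/* Hypothetical pressure tiers mirroring the prose above. */
typedef enum { BP_NONE, BP_MODERATE, BP_HIGH, BP_STALL } bp_level_t;

/* Classify pressure from the L0 immutable queue depth and L1 file count.
 * Assumption: all boundaries are inclusive. */
bp_level_t bp_classify(int l0_depth, int l1_files,
                       int stall_threshold, int l1_trigger) {
    assert(stall_threshold > 0 && l1_trigger > 0);
    if (l0_depth >= stall_threshold) {
        return BP_STALL; /* 100% of the stall threshold or above */
    }
    if (l0_depth * 100 >= stall_threshold * 80 || l1_files >= 4 * l1_trigger) {
        return BP_HIGH; /* 80% of stall threshold or 4x L1 trigger */
    }
    if (l0_depth * 100 >= stall_threshold * 50 || l1_files >= 3 * l1_trigger) {
        return BP_MODERATE; /* 50% of stall threshold or 3x L1 trigger */
    }
    return BP_NONE;
}

/* Per-commit sleep in microseconds; -1 means "block and poll every 10ms". */
int bp_delay_us(bp_level_t level) {
    switch (level) {
    case BP_MODERATE: return 500;  /* 0.5ms */
    case BP_HIGH:     return 2000; /* 2ms */
    case BP_STALL:    return -1;
    default:          return 0;
    }
}
```

With the defaults (stall threshold 20, L1 trigger 4), a queue depth of 10 or an L1 count of 12 lands in the moderate tier, and a depth of 16 or an L1 count of 16 lands in the high tier.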

Coordination with L1

The backpressure mechanism considers both L0 queue depth and L1 file count. High L1 file count indicates compaction is falling behind, which will eventually slow flush operations (flush workers must wait for compaction to free space). By throttling writes based on L1 file count, the system prevents a cascading backlog. L1 acts as a leading indicator, and throttling occurs before L0 pressure becomes critical.

Memory Protection

To bound memory growth from immutable accumulation, the flush path enforces a hard cap of 16 immutable memtables per column family. When the queue reaches this limit, the flush path blocks until the flush worker drains the queue below the cap. This complements the L0 stall threshold (which slows writes) by providing an absolute ceiling on immutable memory.

Each immutable memtable holds the full contents of a rotated memtable (64MB by default), so the hard cap limits queued immutable memory to 16 × 64MB = 1,024MB (1GB). Combined with the active memtable (64MB), this bounds memtable memory to 1,088MB (roughly 1.06GB) per column family under maximum write pressure, preventing out-of-memory conditions. The stall threshold (default 20) is higher than the hard cap, meaning writes block at the hard cap before reaching the stall threshold under normal conditions.

Global Memory Pressure

Per-column-family backpressure alone cannot prevent OOM when many column families accumulate memory simultaneously. The system maintains a global memory pressure level computed by the reaper thread every 100ms. The reaper sums all active memtables, immutable memtable estimates (using write_buffer_size as a conservative bound on each immutable’s data size), in-flight transaction memory (txn_memory_bytes), compaction temporary memory estimates, bloom filter bitsets, block index arrays, and cache memory across all column families. It then computes the ratio against a resolved memory limit (configurable via max_memory_usage, default 50% of system RAM, minimum 5% of system RAM). Column family creation validates that write_buffer_size does not exceed the resolved memory limit. Cache sizes are validated at open time and clamped to 30% of the limit if they would exceed it.

The pressure level is graduated: normal (below 60%), elevated (60 to 75%), high (75 to 90%), and critical (90% or above). The write path reads this level with a single atomic load per commit, adding zero overhead at normal pressure.

At elevated pressure, the adaptive flush threshold tightens to 100% of write_buffer_size (no headroom) and the write path proactively triggers a non-forced flush on the current column family if it is not already flushing, plus a 0.2ms yield to slow ingestion and prevent escalation (skipped if L0/L1 backpressure already applied a delay on this commit).

At high pressure, the write path force-flushes the current column family and sleeps for 2ms (skipped if L0/L1 already delayed), while the reaper force-flushes the largest non-flushing active memtable.

At critical pressure, the write path performs a self-help flush on the current column family (if not already flushing) and then blocks writes entirely until the reaper brings pressure below critical, timing out after 10 seconds with TDB_ERR_MEMORY_LIMIT. The reaper responds to critical pressure with a nuclear flush, force-flushing every column family that is not already flushing, plus aggressive compaction on the column family with the most SSTables.

The is_flushing and is_compacting atomic flags are checked at every level to prevent redundant operations and ensure relief efforts target actionable column families.

The L0 stall threshold scales dynamically in multi-CF deployments: the effective threshold is reduced when multiple column families share the memory budget, ensuring per-CF stall engages before global pressure reaches critical. An OS-level safety net polls get_available_memory() every roughly 5 seconds and overrides the pressure level to critical if real available memory drops below 5% of total system RAM, catching memory consumption from sources outside TidesDB’s tracking.
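The mapping from the memory ratio to the four pressure levels can be sketched as below. The enum and function names are hypothetical, and the sketch covers only the classification step, not the reaper's accounting or the OS-level override.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical names for the four graduated levels. */
typedef enum { MP_NORMAL, MP_ELEVATED, MP_HIGH, MP_CRITICAL } mem_pressure_t;

/* Classify tracked memory against the resolved limit.
 * Integer comparisons avoid floating point; this assumes
 * used_bytes * 100 and limit_bytes * 90 fit in 64 bits. */
mem_pressure_t mem_pressure_level(uint64_t used_bytes, uint64_t limit_bytes) {
    assert(limit_bytes > 0);
    uint64_t scaled = used_bytes * 100;
    if (scaled >= limit_bytes * 90) {
        return MP_CRITICAL; /* 90% or above */
    }
    if (scaled >= limit_bytes * 75) {
        return MP_HIGH; /* 75 to 90% */
    }
    if (scaled >= limit_bytes * 60) {
        return MP_ELEVATED; /* 60 to 75% */
    }
    return MP_NORMAL; /* below 60% */
}
```

In the design described above, the reaper would publish the result to an atomic variable so that the commit path only needs a single atomic load.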

Worker Coordination

The throttling mechanism assumes flush workers are making progress. If the queue depth remains at or above the stall threshold for 10 seconds (1000 iterations at 10ms each), the system returns an error indicating the flush worker may be stuck. This typically indicates disk I/O failure, insufficient disk space, or a deadlock in the flush path.

Configuration Interaction

Increasing write_buffer_size reduces flush frequency but increases memory usage during stalls. Increasing l0_queue_stall_threshold allows more memory usage but provides more buffering for bursty workloads. Increasing flush worker count reduces queue depth under sustained write load. Setting max_memory_usage caps the global memory envelope across all column families. The optimal configuration depends on write patterns, available memory, and disk throughput.

The graduated backpressure approach provides smooth degradation rather than traditional binary throttling (normal operation or complete stall), contributing to TidesDB’s sustained write performance advantage.

Read Path

Search Order

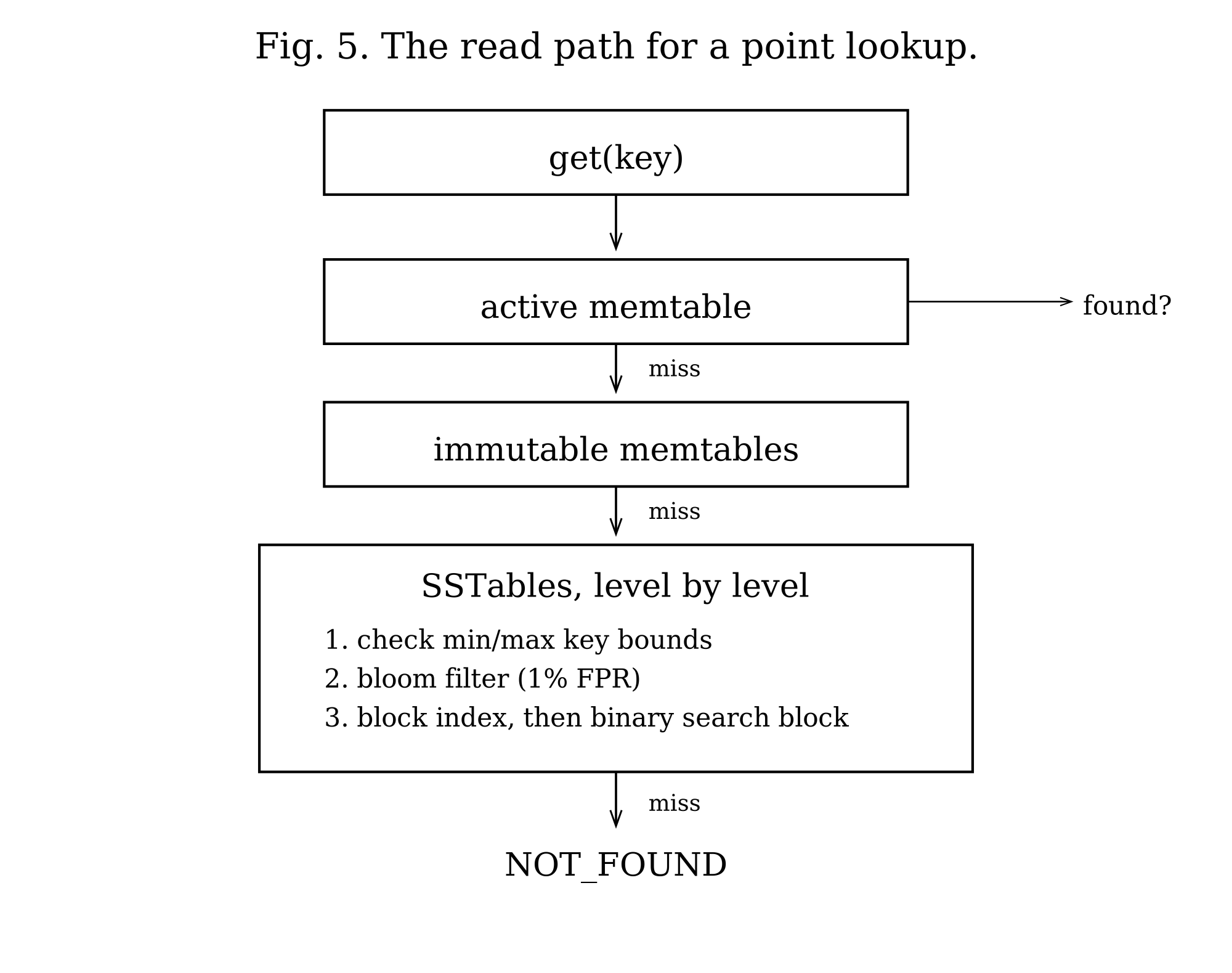

A read searches for a key in the following order (Figure 5): the active memtable first, then immutable memtables from newest to oldest, then SSTables in level 1, then level 2, and so on. The search stops at the first occurrence. Since newer data resides in earlier locations, this finds the most recent version.

SSTable Lookup

For each SSTable, the system first checks min/max key bounds using the column family’s comparator. If a bloom filter exists (enable_bloom_filter=1), it checks that next. A negative result means the key is definitely absent. If a block index exists (enable_block_indexes=1), the system finds which block might contain the key. It then initializes a cursor at the block index hint (if available) or at the first block.

For each block, the system generates a cache key from column family name, SSTable ID, and block offset if the block cache exists. On a cache hit, it copies raw bytes from cache, decompresses if needed, and deserializes. On a cache miss, it reads the block from disk, decompresses if needed, deserializes, and caches the raw bytes. It then binary searches the block for the key. If the entry is found and has a vlog offset, the system reads the value from the value log.

When a key has more than one retained version in the same block (a snapshot reader is holding an older version alive past the point a newer version was written), block search is not free to stop at the first match. tidesdb_klog_block_search_raw lower-bounds to the key, then scans the contiguous run of entries sharing it, returning the entry with the highest sequence number at or below a caller-supplied seq_ceiling. The ceiling is threaded through tidesdb_sstable_get and tidesdb_sstable_get_btree from the caller’s snapshot: a Repeatable Read or Snapshot reader passes its snapshot sequence so it sees the version it was supposed to see, while conflict detection passes UINT64_MAX so it sees the newest committed version regardless of any reader’s pinning. This is what makes “newest visible to my snapshot” a well-defined answer on a key that has both a put-at-seq-5 and a delete-at-seq-9 retained in the same block.
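The ceiling-bounded search can be illustrated with a simplified in-memory block. This is a sketch, not tidesdb_klog_block_search_raw: entry_t and find_visible are hypothetical, keys are NUL-terminated strings compared with strcmp, and no ordering of versions within a run of equal keys is assumed.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical, simplified block entry. */
typedef struct {
    const char *key;
    uint64_t seq;
    int is_tombstone;
} entry_t;

/* Return the index of the version of `key` with the highest sequence
 * number at or below seq_ceiling, or -1 if no such version exists.
 * Entries must be sorted by key. */
long find_visible(const entry_t *entries, size_t count,
                  const char *key, uint64_t seq_ceiling) {
    assert(entries != NULL || count == 0);

    /* Lower bound: first entry whose key is >= the search key. */
    size_t lo = 0, hi = count;
    while (lo < hi) {
        size_t mid = lo + (hi - lo) / 2;
        if (strcmp(entries[mid].key, key) < 0) {
            lo = mid + 1;
        } else {
            hi = mid;
        }
    }

    /* Scan the contiguous run of entries sharing the key. */
    long best = -1;
    for (size_t i = lo; i < count && strcmp(entries[i].key, key) == 0; i++) {
        if (entries[i].seq <= seq_ceiling &&
            (best < 0 || entries[i].seq > entries[best].seq)) {
            best = (long)i;
        }
    }
    return best;
}
```

For a block holding a put at sequence 5 and a delete at sequence 9 for the same key, a reader with a snapshot at sequence 7 gets the put, while a caller passing UINT64_MAX gets the delete.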

The bloom filter (default 1% FPR) and block index are optional optimizations configured per column family. A bloom filter false positive requires a bloom filter check (memory access), a block index lookup (likely a cache miss and therefore a disk read), a block read and deserialize (another cache miss and disk read), and a binary search of the block (memory). That amounts to two disk reads for a key that does not exist. At a 1% FPR, roughly one in every hundred bloom filter checks for an absent key pays this cost, which adds significant I/O at high query rates.

The block cache uses a clock eviction policy with reference bits. Multiple readers share cached blocks without copying. The clock hand checks each entry’s ref_bit: if ref_bit == 0, the entry is evicted; if ref_bit > 0, the bit is cleared to 0 (second chance) and the hand moves on. Readers increment ref_bit when accessing an entry, protecting it from eviction during use.
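The clock hand's second-chance sweep can be sketched as follows. The structure and function names are hypothetical, and the sketch ignores concurrency and pinning: it shows only the sweep logic on a plain array.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical cache slot carrying only the reference marker. */
typedef struct {
    unsigned ref_bit;
} cache_entry_t;

/* Advance the clock hand until an entry with ref_bit == 0 is found.
 * Entries with ref_bit > 0 are cleared (second chance) and skipped.
 * Returns the index of the victim; *hand ends one slot past it. */
size_t clock_pick_victim(cache_entry_t *entries, size_t count, size_t *hand) {
    assert(count > 0 && *hand < count);
    for (;;) {
        size_t i = *hand;
        *hand = (i + 1) % count;
        if (entries[i].ref_bit == 0) {
            return i; /* not referenced since the last sweep: evict */
        }
        entries[i].ref_bit = 0; /* referenced: clear and move on */
    }
}
```

The loop always terminates in this single-threaded form, because after at most one full revolution every entry it passed has been cleared.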

Block Index

The block index enables fast key lookups by mapping key ranges to file offsets. Instead of scanning all blocks sequentially, the system uses binary search on the index to jump directly to the block that might contain the key.

Structure

The index stores three parallel arrays: min_key_prefixes, holding the first key prefix of each indexed block (configurable length, default 16 bytes); max_key_prefixes, holding the last key prefix of each indexed block; and file_positions, holding the file offset where each block starts.

Sparse Sampling

The index_sample_ratio setting (default 1, from TDB_DEFAULT_INDEX_SAMPLE_RATIO) controls how many blocks are indexed. A ratio of 1 indexes every block; a ratio of 10 indexes every 10th block. Sparse indexing reduces memory usage at the cost of potentially scanning multiple blocks on lookup.

Prefix Compression

Keys are stored as fixed-length prefixes (default 16 bytes, configurable via block_index_prefix_len). Keys shorter than the prefix length are zero-padded. This trades precision for space: keys with identical prefixes may require scanning multiple blocks to disambiguate.
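The truncate-or-pad step can be sketched in a few lines. The function name make_prefix is hypothetical; the zero padding and the 16-byte default follow the description above.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Build a fixed-length prefix: copy up to prefix_len bytes of the key
 * and zero-pad the remainder when the key is shorter. */
void make_prefix(const unsigned char *key, size_t key_len,
                 unsigned char *out, size_t prefix_len) {
    assert(key != NULL && out != NULL);
    size_t n = key_len < prefix_len ? key_len : prefix_len;
    memset(out, 0, prefix_len);
    memcpy(out, key, n);
}
```

Two keys such as "user:0000000001:profile" and "user:0000000001:settings" agree on their first 16 bytes, so they map to the same prefix and the index alone cannot tell them apart.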

Binary Search Algorithm

The block index is lossy. It stores only the first prefix_len bytes of each block’s min and max keys, so two keys whose prefixes are identical but whose suffixes differ can land in different blocks even though the index cannot tell them apart. A naive predecessor lookup that returned “the rightmost block whose min prefix is at or below the search prefix” would overshoot the block that actually holds the key when several adjacent blocks share the prefix, and on index_sample_ratio = 1 would return false-not-founds for keys whose prefix appears in multiple consecutive blocks.

The lookup is therefore split in two. compact_block_index_find_slot() performs the binary search and returns the leftmost block whose max prefix is at or above the search prefix. This is the first block that could hold either the key or a key that sorts after it, so the caller is guaranteed not to start past the target. compact_block_index_run_length() then counts how many consecutive blocks starting at that slot share a min prefix at or below the search prefix; this is the prefix-colliding run, and the caller must scan every block in it before declaring not-found because the index is not precise enough to disambiguate which block of the run actually holds the key. For unique prefixes the run length is 1 and the lookup degenerates to a single-block read. For shared prefixes the run length is short (rarely more than 2 or 3 blocks in practice) and the small scan is still vastly cheaper than the full block-by-block fallback.

When the block index covers every block (index_sample_ratio == 1, the default), scanning the colliding run is also definitive: if the key is not in any block of the run, it is not in the SSTable at all and the search short-circuits.
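The two-step lookup can be sketched over flat arrays of fixed-length prefixes. This is an illustration rather than the bodies of compact_block_index_find_slot() and compact_block_index_run_length(): the sketch_ names are hypothetical, prefixes are compared with memcmp (that is, a plain bytewise comparator is assumed), and each array stores count prefixes of prefix_len bytes back to back.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Binary search for the leftmost block whose max prefix is >= search.
 * Returns count when every block's max prefix sorts before the search
 * prefix, meaning the key cannot be in this SSTable. */
size_t sketch_find_slot(const unsigned char *max_prefixes, size_t count,
                        size_t prefix_len, const unsigned char *search) {
    assert(prefix_len > 0);
    size_t lo = 0, hi = count;
    while (lo < hi) {
        size_t mid = lo + (hi - lo) / 2;
        if (memcmp(max_prefixes + mid * prefix_len, search, prefix_len) < 0) {
            lo = mid + 1; /* block ends before the search prefix */
        } else {
            hi = mid; /* candidate; keep looking to the left */
        }
    }
    return lo;
}

/* Count consecutive blocks from slot whose min prefix is <= search.
 * This is the prefix-colliding run the caller must scan. */
size_t sketch_run_length(const unsigned char *min_prefixes, size_t count,
                         size_t prefix_len, size_t slot,
                         const unsigned char *search) {
    assert(prefix_len > 0);
    size_t n = 0;
    while (slot + n < count &&
           memcmp(min_prefixes + (slot + n) * prefix_len, search,
                  prefix_len) <= 0) {
        n++;
    }
    return n;
}
```

With 4-byte prefixes and four blocks whose (min, max) prefixes are (aaaa, bbbb), (bbbb, bbbb), (bbbb, cccc), and (dddd, eeee), a search for bbbb yields slot 0 with a run of 3 blocks, while a search for cccc yields slot 2 with a run of 1.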

Early Termination

When a block index successfully identifies the candidate block, the point read path enables early termination. If the key is not found in the candidate block (or, when several adjacent blocks share the prefix, in the prefix-colliding run), the search stops immediately rather than scanning subsequent blocks. Since blocks are sorted, the key cannot exist in later blocks if it was not in the blocks the index pointed to. This optimization significantly reduces I/O for negative lookups and keys near block boundaries.

Serialization

The index serializes compactly using delta encoding for file positions (varints) and raw prefix bytes. The format is varint(count), varint(prefix_len), delta-encoded file positions, min key prefixes, and max key prefixes. This achieves roughly 50% space savings compared to storing absolute positions.
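Delta encoding of file positions with varints can be sketched as below. The byte layout is an assumption: the sketch uses the common LEB128-style varint (7 payload bits per byte, high bit set when more bytes follow), which may differ from TidesDB's actual encoding, and it covers only the positions array rather than the full serialized format. All function names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Encode v as a LEB128-style varint; returns the number of bytes written.
 * The caller must provide room for up to 10 bytes. */
size_t varint_encode(uint64_t v, uint8_t *out) {
    size_t n = 0;
    while (v >= 0x80) {
        out[n++] = (uint8_t)(v | 0x80); /* low 7 bits plus continuation bit */
        v >>= 7;
    }
    out[n++] = (uint8_t)v;
    return n;
}

/* Decode one varint; returns the number of bytes consumed.
 * Assumes well-formed input (no bounds or overflow checks). */
size_t varint_decode(const uint8_t *in, uint64_t *v) {
    uint64_t result = 0;
    size_t n = 0;
    unsigned shift = 0;
    for (;;) {
        uint8_t b = in[n++];
        result |= (uint64_t)(b & 0x7F) << shift;
        if ((b & 0x80) == 0) {
            break;
        }
        shift += 7;
    }
    *v = result;
    return n;
}

/* Encode ascending file positions as varint deltas from the previous
 * position. out must hold up to 10 bytes per position. */
size_t encode_positions(const uint64_t *pos, size_t count, uint8_t *out) {
    size_t n = 0;
    uint64_t prev = 0;
    for (size_t i = 0; i < count; i++) {
        assert(pos[i] >= prev); /* positions must be non-decreasing */
        n += varint_encode(pos[i] - prev, out + n);
        prev = pos[i];
    }
    return n;
}
```

Three positions spaced 4096 bytes apart (0, 4096, 8192) encode to 5 bytes in this scheme, compared with 24 bytes as raw 64-bit integers; actual savings depend on block sizes and on the prefix bytes, which are stored uncompressed.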

Custom Comparators

The index supports pluggable comparator functions, allowing column families with custom key orderings (uint64, lexicographic, reverse, and others) to use block indexes correctly.

Memory Usage

For an SSTable with 1000 blocks and default 16-byte prefixes, the index requires 32KB for prefixes plus 8KB for positions, totaling 40KB. With sparse sampling (ratio 10), this reduces to 4KB. The index is loaded into memory when an SSTable is opened and remains resident.
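The arithmetic above generalizes to a one-line estimate. The function name is hypothetical, positions are assumed to be 8-byte integers, and "KB" in the figures above is read as 1000 bytes.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Estimate resident block index size in bytes: one min prefix, one max
 * prefix, and one 8-byte file position per indexed block. */
size_t block_index_bytes(size_t num_blocks, size_t prefix_len,
                         size_t sample_ratio) {
    assert(sample_ratio > 0);
    /* Round up so a partial final group still gets an index entry. */
    size_t indexed = (num_blocks + sample_ratio - 1) / sample_ratio;
    return indexed * (2 * prefix_len + sizeof(uint64_t));
}
```

For 1000 blocks with 16-byte prefixes this gives 1000 × (32 + 8) = 40,000 bytes at ratio 1 and 100 × 40 = 4,000 bytes at ratio 10.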

Usage in Seeks and Iteration

Block indexes are also used by iterator seek operations (tidesdb_iter_seek() and tidesdb_iter_seek_for_prev()). When seeking to a key, find_slot and run_length return the leftmost candidate block and the colliding run, the cursor jumps directly to the leftmost candidate position, and the iterator scans forward (or backward for seek_for_prev) from there.

This optimization is critical for range queries. Without block indexes, seeking to a key in the middle of a large SSTable would require scanning all blocks from the beginning. With block indexes, the seek operation is O(log N) on the index plus O(M) scanning a small run, rather than O(N*M) scanning all blocks.

The iterator must maintain a precise invariant on the cursor’s cached block size when compression is enabled. block_manager_cursor_next advances cursor->current_pos by header + current_block_size + footer, so current_block_size must hold the on-disk (compressed) size of the just-read block, not the decompressed size. On a cache miss the iterator sets current_block_size = bmblock->size (the compressed on-disk size). On a cache hit, where the cached payload is the decompressed block with an optional in-memory index header prepended, the iterator instead invalidates block_size_valid so the next cursor_next reads the size header from disk. Setting current_block_size to the decompressed size would over-advance the cursor past the next block’s start and into the middle of a block, which is exactly the failure mode the block manager’s size sanity guard exists to catch.

Block Reuse Fast Path

When an SSTable source already has a deserialized block loaded and the seek target falls within that block’s key range (between the first and last key), the seek skips the expensive release, cache lookup, and deserialization cycle entirely and performs an in-place binary search on the existing block. This eliminates the dominant cost of repeated seeks to nearby keys. The fast path fires for both forward and backward seeks and handles edge cases where the target is before the current block (returns first entry) or after it (sequential advance to the next block via cursor_next, bypassing the block index binary search). For workloads with high seek locality, this reduces deserialization CPU from roughly 88% to roughly 8%. The sequential advance path is critical for monotonically advancing seeks (the common iteration pattern) where the next key is always in the next block.

Block Boundary Prefetch

When the iterator advances to the last entry in a klog block, it issues a posix_fadvise willneed hint on the next block’s file position so the OS begins reading it into the page cache before the iterator actually needs it. This hides I/O latency for sequential iteration across block boundaries.

Block Cache Integration for Sequential Advance

When the iterator needs the next block during sequential advancement, it checks the block clock cache before falling back to a pread syscall. On a cache hit, the block data is pinned zero-copy from the cache, the indexed block header is stripped if present, and deserialization proceeds directly from cached memory without any I/O. On a cache miss, the block is read from disk and populated into the cache for subsequent iterations. This closes the gap where iterator seek operations were cache-aware but sequential advancement was not. For hot-set workloads where the same blocks are accessed repeatedly across range scans, point lookups by one thread populate blocks that other threads’ iterators can then read from cache, enabling cross-path cache sharing.

Incremental Indexed Advance

When a cached block has a pre-built key offset index (the indexed block format produced by tidesdb_build_indexed_block_data), the iterator advance path parses only the single next entry directly from the raw bytes using the index table offsets instead of deserializing the entire block. Each next() call reads the 20-byte index entry to get the byte offset, key offset, key size, and absolute sequence number, then jumps to that position in the raw data to parse flags, value size, TTL, and vlog offset from a few varints. Key and value pointers reference the raw buffer directly via cache pin with zero copy. The seek path wires the index pointers from the cached block into the lazy state so the incremental advance fires on every cached block after a seek. Non-indexed blocks (cache miss, no pre-built index) fall back to full O(N) deserialization. This replaces the previous behavior where every next() call after a seek forced full deserialization of all entries in the block even though only one entry was needed.

Cached Memtable Sources

Iterator seek operations cache memtable sources (active memtable, immutable memtables, and transaction write buffer) on the iterator at creation time rather than recreating them on every seek call. This eliminates per-seek overhead of allocating source structs, initializing skip list cursors, traversing to the first entry, and creating initial key-value pairs. The active memtable is pinned with try_ref during iterator creation to prevent a concurrent rotation plus flush from freeing the memtable between the atomic load and the merge source creation. The pin is released after the merge source takes its own internal reference. Immutable memtables are snapshotted via the lock-free RCU snapshot mechanism with per-item try_ref for the same reason. The cached sources are repositioned to the target key on each seek using the existing cursor seek operations. A pre-allocated temporary source array on the iterator avoids malloc/free of the source list on every seek as well. Combined with the SSTable source cache (which persists across seeks via cached_sources), this means the hot seek path performs zero memory allocations.

Zero-Copy Memtable Merge Sources

SSTable merge sources expose their current key-value pair as a borrowed pointer into pinned block data via source->inline_kv with the TDB_KV_FLAG_BORROWED flag, which avoids allocating a fresh key-value pair on every cursor step. Memtable and unified-memtable merge sources use the same pattern. The skip list cursor returns key and value pointers into stable node memory, and the iterator pins the memtable (active via try_ref, immutable via refcount) for its entire lifetime, so the borrowed pointers remain valid until the next advance.

The merge heap materialises a stable owned copy into the iterator’s double-buffered pop_buf arena only when the caller actually retains the popped entry. Discards inside the tombstone-skip loop do not trigger that materialisation.

The tombstone-skip loop itself is consolidated across forward and backward iteration into a single helper. When the heap’s top entry is a visible tombstone, the helper copies the tombstone’s key into a stable stack buffer (with a heap fallback for keys larger than TDB_PREFIXED_KEY_STACK_MAX) and then advances every other source whose current entry matches that key. The forward path uses tidesdb_merge_heap_pop_discard, which moves the top source’s cursor forward without materialising into pop_buf, so each skipped tombstone costs one cursor step and zero key or value copies. Copying the tombstone key onto the stack before the skip loop prevents subsequent pops inside the loop from reusing the same pop_buf slot and overwriting the tombstone-key pointer that the comparator still depends on.

Compaction

Strategy

The compaction strategy consists of three distinct policies based on the principles of the “Spooky” compaction algorithm described in academic literature, working in concert with Dynamic Capacity Adaptation (DCA) to maintain an efficient LSM-tree structure.

Overview

The primary goal of compaction in TidesDB is to reduce read amplification by merging multiple SSTable files, cleaning up obsolete data, and maintaining an efficient LSM-tree structure. TidesDB does not use traditional selectable policies (like Leveled or Tiered); instead, it employs three complementary merge strategies that are automatically selected based on the current state of the database. The core logic resides in the tidesdb_trigger_compaction function, which acts as the central controller for the entire process.

Triggering Process

Compaction is triggered when specific thresholds are exceeded, indicating that the LSM-tree structure requires rebalancing. It initiates under three conditions. First, when Level 1 accumulates a threshold number of SSTables (configurable, default 4 files), the system recognizes that flushed memtables are piling up and need to be merged down into the LSM-tree hierarchy. Second, when any level’s total size exceeds its configured capacity, the system must merge data into the next level to maintain the level size invariant. Each level holds approximately N times more data than the previous level (configurable ratio, default 10x). Third, when any single SSTable’s tombstone density crosses a configurable threshold, compaction is escalated for that column family even if neither file-count nor capacity thresholds are tripped. The Tombstone Density Trigger subsection covers the mechanics.

Tombstone Density Trigger

Workloads dominated by deletes accumulate tombstones inside SSTables until a compaction at the largest level reclaims them. Reads over a deleted region pay for every tombstone the merge iterator has to skip, so a column family with infrequent natural compactions can accumulate enough tombstones to seriously degrade range scan latency. The tombstone density trigger lets the engine recognize and act on this state without waiting for capacity-based or file-count-based triggers.

Each SSTable tracks its tombstone count alongside its entry count. The counter is incremented at every write site that emits a tombstone, including block-format and B+tree memtable flushes, full preemptive merges, dividing merges, partitioned merges, and unified flush per-CF writes. The counter is persisted to the SSTable metadata footer via a flag bit (SSTABLE_FLAG_TOMBSTONE_COUNT) appended after the existing fixed fields, so SSTables written by older binaries remain readable. When the flag is absent the counter loads as a sentinel (UINT64_MAX) meaning unknown and is ignored by the trigger logic. New SSTables always carry the counter.

The trigger is configured by two per-column-family knobs. tombstone_density_trigger is a ratio between 0.0 and 1.0, and setting it above zero arms the check. The default of 0.0 disables it and preserves existing behavior. tombstone_density_min_entries (default 1024) is a floor on the entry count, so single-entry SSTables that happen to be tombstones do not noisily trigger compaction. After every flush, tidesdb_cf_dense_tombstone_witness iterates every level of the column family and asks whether any single SSTable’s tombstone count divided by entry count exceeds the trigger ratio while having at least the minimum entry count. A single witness is enough to escalate compaction, and the system does not require all SSTables to be tombstone-heavy.
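The witness check can be sketched over a flat list of per-SSTable counters. This is not the body of tidesdb_cf_dense_tombstone_witness: sst_stats_t and the function name are hypothetical, and the real check walks each level rather than a single array.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical per-SSTable counters. tombstone_count == UINT64_MAX is
 * the "unknown" sentinel used for SSTables written by older binaries. */
typedef struct {
    uint64_t num_entries;
    uint64_t tombstone_count;
} sst_stats_t;

/* Return true if any single SSTable is dense enough to escalate. */
bool has_dense_tombstone_witness(const sst_stats_t *ssts, size_t count,
                                 double trigger, uint64_t min_entries) {
    assert(trigger >= 0.0 && trigger <= 1.0);
    if (trigger <= 0.0) {
        return false; /* 0.0 disables the check */
    }
    for (size_t i = 0; i < count; i++) {
        if (ssts[i].tombstone_count == UINT64_MAX) {
            continue; /* counter unknown: ignored by the trigger */
        }
        if (ssts[i].num_entries == 0 || ssts[i].num_entries < min_entries) {
            continue; /* too small to be a meaningful signal */
        }
        double density =
            (double)ssts[i].tombstone_count / (double)ssts[i].num_entries;
        if (density > trigger) {
            return true; /* a single witness is enough */
        }
    }
    return false;
}
```

With a trigger of 0.5 and the default floor of 1024 entries, an SSTable holding 3000 tombstones among 4096 entries is a witness, while a single-entry SSTable that is entirely a tombstone is not.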

The witness runs regardless of the structural triggers. The L1 file count and per-level capacity checks still fire on their own, but the density check is independent: a column family whose write pattern never trips a structural trigger can still cross the density threshold and force a compaction. On a witness hit the system emits a targeted merge of the witness SSTable’s key range straight to the largest active level, where regular tombstones are finally allowed to drop. This is the same tidesdb_targeted_merge machinery the public tidesdb_compact_range API rides on, just driven by the engine rather than the caller. The same path is wired into both the per-CF flush completion and the unified flush completion, so unified mode column families get tombstone relief without any extra plumbing. The witness loop itself is a small scan over a refcounted snapshot of each level, so it is cheap relative to a flush.

The statistics surface exposes the trigger state for observability. tidesdb_stats_t adds total_tombstones for the sum across all SSTables, tombstone_ratio for the database-wide ratio, level_tombstone_counts for the per-level breakdown, max_sst_density for the worst single SSTable seen, and max_sst_density_level for the level it lives on. These let operators see how close the column family is to the trigger and tune the ratio to their workload.

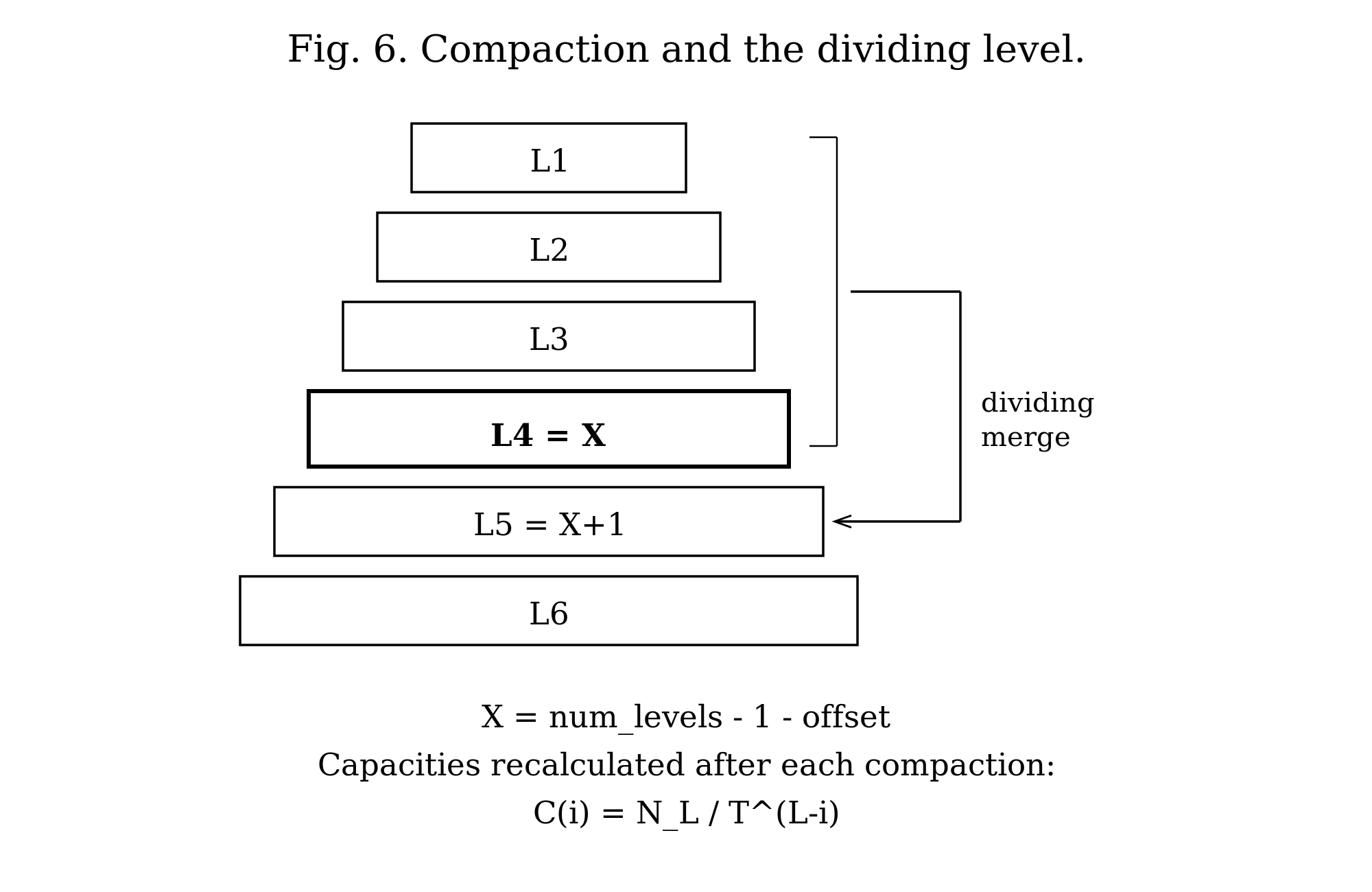

Calculating the Dividing Level

The algorithm calculates a dividing level (X) that serves as the primary compaction target (Figure 6). This is not a theoretical reference point but rather a concrete level in the LSM-tree computed using:

X = num_levels - 1 - dividing_level_offset

Where dividing_level_offset is a configurable parameter (default 2) that controls compaction aggressiveness. A lower offset means more aggressive compaction (merging more frequently into higher levels), while a higher offset defers compaction work.

For example, with 7 active levels and the default offset of 2:

X = 7 - 1 - 2 = 4

This means Level 4 serves as the primary merge destination.

Selecting the Merge Strategy

Based on the compaction trigger and the relationship between the affected level and the dividing level X, the algorithm selects one of three merge strategies.

If the target level equals X, the system performs a dividing merge, merging all levels from 1 through X into level X+1. This is the default case when no level before X is overflowing.

If a level before X cannot accommodate the cumulative data, the system performs a full preemptive merge from level 1 to that target level. This handles cases where intermediate levels are filling up faster than expected.

After the initial merge, if level X is still full, the system performs a partitioned merge from level X to a computed target level z. This is a secondary cleanup phase that runs after the primary merge completes.

The Three Merge Modes

The compaction algorithm employs three distinct merge methods, each optimized for different scenarios within the LSM-tree lifecycle.

Full Preemptive Merge

This is the most straightforward merge operation. It combines all SSTables from two adjacent levels into the target level.

It is used when a level before the dividing level X cannot accommodate the cumulative data from levels 1 through that level. It also serves as a fallback mechanism by the other merge functions when they cannot determine partitioning boundaries (for example, when there are no existing SSTables at the target level to use as partition guides).

The operation takes a start_level and target_level as input, opens all SSTables from both levels, and creates a min-heap containing merge sources from all SSTables. It iteratively pops the minimum key from the heap, writing surviving entries (non-tombstones, non-expired TTLs, keeping only the newest version by sequence number) to new SSTables at the target level. It fsyncs the new SSTables, commits them to the manifest, and marks old SSTables for deletion.

This mode is simple and effective for small-scale merges but generates potentially large output files since it does not partition the key space.

Dividing Merge

This is the standard, large-scale compaction method for maintaining the overall health of the LSM-tree. It is designed to periodically consolidate the upper levels of the tree into a deeper level.

It is used when the target level equals the dividing level X, which is the default case when no intermediate level is overflowing. This is the expected scenario during normal write-heavy workloads when Level 1 accumulates the threshold number of SSTables (default 4).

The merge combines all levels from Level 1 through the dividing level X into level X+1. If X is the largest level in the database, the system first invokes Dynamic Capacity Adaptation (DCA) to add a new level before performing the merge, ensuring there is always a destination level available. The merge is intelligent about partitioning: it examines level X+1 (the destination level) and extracts the minimum and maximum keys from each SSTable at that level. These key ranges serve as partition boundaries. The key space is divided into ranges based on these boundaries, and the merge is performed in chunks. Each chunk produces SSTables that cover only a single key range. This partitioning prevents the creation of monolithic SSTables and distributes data more evenly across the target level. If no partitioning boundaries can be determined (for example, if the target level is empty), the function falls back to calling tidesdb_full_preemptive_merge.

This mode handles large-scale data movement efficiently, produces smaller and more manageable output files due to partitioning, and is critical for maintaining read performance by preventing excessive file proliferation at upper levels.

Partitioned Merge

This is a specialized merge designed for secondary cleanup after the initial merge phase. It addresses scenarios where the dividing level X remains full after the primary merge operation.

It is used after the initial merge (dividing or full preemptive), when the dividing level X is still full and its size exceeds its capacity. The merge operates on a specific range of levels (from level X to a computed target level z) rather than merging all the way from Level 1. Like the dividing merge, it uses the largest level’s SSTable key ranges as partition boundaries. It divides the key space and merges each partition independently, producing smaller output SSTables that each cover a single key range.

This approach is more focused and less resource-intensive than a full dividing merge, allowing the system to relieve pressure in a specific area of the LSM-tree without triggering a full tree-wide compaction. It is fast and targeted, and helps prevent compaction from falling behind during bursty write patterns.

When the partitioned merge targets the largest level, output SSTables are capped at file_max = C_X (the capacity of the dividing level), per Algorithm 2 of the Spooky paper. If a partition’s output exceeds this threshold, it is split into multiple SSTables at klog block boundaries. This bounds transient space amplification to 1/T regardless of partition key distribution skew, ensuring all files at the largest level have similar sizes between N_L/(T·2) and N_L/T bytes.

Dynamic Capacity Adaptation (DCA)

DCA is a separate mechanism from compaction. Whilst the three merge modes determine how to merge data, DCA determines when to add or remove levels from the LSM-tree structure and continuously recalibrates level capacities to match the actual data distribution.

DCA is not a constantly running process. Instead, it is triggered automatically after operations that significantly change the structure or data distribution of the LSM-tree. It runs after a compaction cycle completes, when the tidesdb_trigger_compaction function calls tidesdb_apply_dca at the end of its run. It also runs after a level is removed, when the tidesdb_remove_level function calls tidesdb_apply_dca to rebalance the capacities of the remaining levels.

Capacity Recalculation Formula

The core of DCA is the tidesdb_apply_dca() function, which recalculates the capacity of all levels based on the actual size of the largest (bottom-most) level. The formula used is:

$$C_i = \frac{N_L}{T^{L-i}}$$

where C_i is the new calculated capacity for level i, N_L is the actual size in bytes of data in the largest level L (the ground truth of how much data exists), T is the configured level size ratio between levels (default 10, meaning each level is 10x larger than the one above), L is the total number of active levels in the column family, and i is the index of the current level being calculated.

The execution proceeds as follows. The system gets the current number of active levels, identifies the largest level and measures its current total size, iterates through all levels from Level 0 to Level L-2, applies the formula to calculate the new capacity for each level (with a minimum floor of write_buffer_size), and updates the capacity property accordingly.
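The recalculation can be sketched in a few lines of C. This is an illustration under stated assumptions rather than the body of tidesdb_apply_dca(): the function names are hypothetical, levels are numbered 1 through L so that the level directly above the largest receives N_L / T, and the ratio is assumed to be at least 2.

```c
#include <stdint.h>

/* Capacity for level i when the largest level is L (both 1-based, i < L).
 * Repeated integer division equals floor(n_l / ratio^(L - i)).
 * floor_bytes plays the role of the write_buffer_size minimum. */
uint64_t dca_capacity(uint64_t n_l, uint64_t ratio, int L, int i,
                      uint64_t floor_bytes) {
    uint64_t cap = n_l;
    for (int k = 0; k < L - i; k++) cap /= ratio;
    return cap < floor_bytes ? floor_bytes : cap;
}

/* Rewrites the capacities of every level except the largest one.
 * capacity[i - 1] holds the capacity of level i; capacity[L - 1] is untouched. */
void dca_recalculate(uint64_t *capacity, int L, uint64_t n_l, uint64_t ratio,
                     uint64_t floor_bytes) {
    for (int i = 1; i < L; i++) {
        capacity[i - 1] = dca_capacity(n_l, ratio, L, i, floor_bytes);
    }
}
```

With N_L = 1,000,000,000 bytes, T = 10, L = 5, and a 64 MiB floor, level 4 receives 100,000,000 bytes, while levels 1 through 3 would compute to less than the floor and are clamped to 67,108,864 bytes.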

This adaptive approach ensures that level capacities remain proportional to the real-world size of data at the bottom of the tree. As the database grows or shrinks, DCA automatically adjusts capacities to maintain optimal compaction timing, preventing both over-provisioned capacities (which cause high read amplification) and under-provisioned capacities (which cause excessive compaction and high write amplification).

Level Addition

DCA adds a new level when the dividing merge attempts to merge into the largest level (X is the maximum level number), or when a level exceeds its capacity and needs a destination level that does not yet exist.

The system creates a new empty level with capacity calculated using the formula write_buffer_size * T^(level_num-1), where level_num is the new level’s number. It atomically increments num_active_levels to reflect the new structure. Normal compaction then moves data into this new level. The data is not moved during level addition itself, to avoid complex data migration logic and potential key loss. After the compaction cycle completes, tidesdb_apply_dca() is invoked to recalculate capacities for all levels based on actual data distribution.
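As a worked instance of the initial-capacity formula, the sketch below computes write_buffer_size * T^(level_num-1) with a saturating multiply. The function name is hypothetical, and the overflow handling is an addition for safety in the example, not something the text attributes to TidesDB.

```c
#include <stdint.h>

/* Initial capacity of a newly added level: write_buffer_size * ratio^(level_num - 1).
 * Saturates at UINT64_MAX instead of wrapping on overflow (an assumption of this sketch). */
uint64_t initial_level_capacity(uint64_t write_buffer_size, uint64_t ratio,
                                int level_num) {
    uint64_t cap = write_buffer_size;
    for (int k = 1; k < level_num; k++) {
        if (ratio != 0 && cap > UINT64_MAX / ratio) return UINT64_MAX;
        cap *= ratio;
    }
    return cap;
}
```

With a 64 MiB write buffer and T = 10, level 1 starts at 67,108,864 bytes and level 3 at 6,710,886,400 bytes. These are provisional values: the post-compaction DCA pass replaces them with capacities derived from the actual data.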

Level Removal

DCA removes a level only when all of the following conditions hold: the largest level is completely empty after compaction, the number of active levels exceeds the configured minimum (default 5 levels), the level was not added in the current compaction cycle (newly added levels are intentionally empty), no flushes are pending, and no SSTables exist at Level 1.

The system verifies that num_active_levels > min_levels to prevent thrashing (repeatedly adding and removing levels). It updates the new largest level’s capacity using the formula new_capacity = old_capacity / level_size_ratio, frees the empty level structure, atomically decrements num_active_levels, and invokes tidesdb_apply_dca() to rebalance all level capacities based on the new level count and the actual size of the new largest level.
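The removal conditions combine into a single predicate, sketched below. The `cf_levels_t` struct and its field names are hypothetical stand-ins for whatever state the column family actually tracks; only the conditions themselves come from the description above.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical snapshot of the state the removal check needs. */
typedef struct {
    int num_active_levels;
    int min_levels;
    uint64_t largest_level_bytes;   /* total bytes in the largest level */
    bool largest_added_this_cycle;  /* true if DCA added it during this compaction */
    int pending_flushes;            /* immutable memtables waiting to flush */
    int level1_sstables;            /* SSTables currently at Level 1 */
} cf_levels_t;

/* True only when every removal condition holds. */
bool can_remove_largest_level(const cf_levels_t *s) {
    return s->largest_level_bytes == 0
        && s->num_active_levels > s->min_levels
        && !s->largest_added_this_cycle
        && s->pending_flushes == 0
        && s->level1_sstables == 0;
}

/* new_capacity = old_capacity / level_size_ratio, applied to the new largest level.
 * Assumes level_size_ratio is non-zero. */
uint64_t capacity_after_removal(uint64_t old_capacity, uint64_t level_size_ratio) {
    return old_capacity / level_size_ratio;
}
```

Because the check requires num_active_levels to be strictly greater than min_levels, a tree sitting exactly at the minimum never shrinks, which is what prevents add/remove thrashing.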

Initialization

Column families start with a minimum number of pre-allocated levels (configurable via min_levels, default 5). During recovery, if the manifest indicates SSTables exist at level N where N exceeds min_levels, the system initializes with N levels to accommodate the existing data. If SSTables exist only at levels below min_levels (for example, only Levels 1 through 3), the system still initializes with min_levels (5), leaving upper levels (4 and 5) empty. This floor prevents small databases from thrashing between 2 and 3 levels and guarantees predictable read performance by maintaining a minimum tree depth. After initialization, tidesdb_apply_dca() is invoked to set appropriate capacities for all levels based on the actual data found during recovery.
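The level count chosen at startup reduces to a maximum of two values. The helper below is a hypothetical one-liner that mirrors the rule, where `highest_level_with_data` is the deepest level the manifest reports SSTables at (0 for a fresh column family).

```c
/* Number of levels to allocate at startup: never fewer than min_levels,
 * and enough to hold the deepest level that already has SSTables. */
int initial_level_count(int min_levels, int highest_level_with_data) {
    return highest_level_with_data > min_levels ? highest_level_with_data
                                                : min_levels;
}
```

For example, with min_levels = 5, data only at Levels 1 through 3 still yields 5 levels, while data at Level 7 yields 7.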

The Merge Process

All three merge policies share a common merge execution path with slight variations.

The system first opens all source SSTables, including the klog and vlog files for all SSTables involved in the merge from both the source and target levels. For each SSTable, it creates a merge source structure containing the source type, the current key-value pair being considered, and a cursor for iterating through the source.

Next, the system constructs a min-heap (tidesdb_merge_heap_t) with elements of type tidesdb_merge_source_t*. The heap orders sources by their current key using the column family’s configured comparator.

The iterative merge proceeds by repeatedly popping the minimum element from the heap (the source with the smallest current key), advancing that source’s cursor to the next entry, and sifting the source back down into the heap based on its new current key.

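Filtering and deduplication happen during this process. Tombstone entries are discarded when the merge writes into the largest level; otherwise they are carried forward, because a deeper level may still hold an older put for the same key. Entries whose time-to-live has expired are discarded. When multiple sources contain the same key, only the version with the highest sequence number is kept. Older versions are discarded.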
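The heap-driven loop and its filtering rules can be sketched as a small k-way merge. This is a simplified model, not TidesDB's tidesdb_merge_heap_t: the `entry_t` and `source_t` types, the fixed source limit, the in-memory arrays standing in for SSTable cursors, and the `strcmp` comparator are all assumptions of the example. Tombstones are dropped only when `into_largest_level` is true, matching the tombstone rule described under Single-Delete and Pair Cancellation.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define MAX_SOURCES 16

typedef struct {
    const char *key;
    uint64_t seq;        /* higher means newer */
    bool tombstone;
    int64_t expires_at;  /* 0 means no TTL */
} entry_t;

typedef struct {
    const entry_t *entries;  /* sorted by key ascending */
    size_t count;
    size_t pos;              /* cursor */
} source_t;

/* Heap order: smallest key first; for equal keys, highest sequence first. */
static bool source_less(const source_t *a, const source_t *b) {
    int c = strcmp(a->entries[a->pos].key, b->entries[b->pos].key);
    if (c != 0) return c < 0;
    return a->entries[a->pos].seq > b->entries[b->pos].seq;
}

static void sift_down(source_t **heap, size_t n, size_t i) {
    for (;;) {
        size_t l = 2 * i + 1, r = l + 1, m = i;
        if (l < n && source_less(heap[l], heap[m])) m = l;
        if (r < n && source_less(heap[r], heap[m])) m = r;
        if (m == i) return;
        source_t *t = heap[i]; heap[i] = heap[m]; heap[m] = t;
        i = m;
    }
}

/* Merges all sources into out and returns the number of surviving entries. */
size_t merge_sources(source_t *sources, size_t nsrc, bool into_largest_level,
                     int64_t now, entry_t *out, size_t out_cap) {
    source_t *heap[MAX_SOURCES];
    size_t n = 0, emitted = 0;
    const char *last_key = NULL;
    if (nsrc > MAX_SOURCES) return 0;
    for (size_t i = 0; i < nsrc; i++) {
        if (sources[i].count > 0) { sources[i].pos = 0; heap[n++] = &sources[i]; }
    }
    for (size_t i = n / 2; i-- > 0;) sift_down(heap, n, i);  /* heapify */

    while (n > 0) {
        source_t *top = heap[0];
        entry_t e = top->entries[top->pos++];             /* pop and advance cursor */
        if (top->pos == top->count) heap[0] = heap[--n];  /* source exhausted */
        sift_down(heap, n, 0);

        if (last_key && strcmp(last_key, e.key) == 0) continue;  /* older version */
        last_key = e.key;  /* newest version masks older ones even if dropped below */
        if (e.tombstone && into_largest_level) continue;
        if (e.expires_at != 0 && e.expires_at <= now) continue;
        if (emitted < out_cap) out[emitted++] = e;
    }
    return emitted;
}
```

Recording `last_key` before the tombstone and TTL checks matters: a dropped tombstone or expired entry must still suppress the older versions behind it, otherwise a stale put would resurface.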

Surviving entries are written to new SSTables at the target level. Data is written in 64KB blocks. Values meeting or exceeding the configured threshold (512 bytes by default) are written to the value log, while the key log stores only the file offset. Smaller values are stored inline in the key log. Blocks are optionally compressed using the column family’s configured compression algorithm.

After all data is written, the system appends auxiliary structures to each key log: a block index for fast lookups, a bloom filter for negative lookups (if enabled), and a metadata block with SSTable statistics. The system fsyncs both the klog and vlog files to ensure durability.

The new SSTables are committed to the manifest file, which tracks which SSTables belong to which levels. This operation is atomic: the manifest is written to a temporary file, fsynced, and atomically renamed over the original. Finally, the old SSTables from the source and target levels are marked for deletion. The actual file deletion may be deferred by the reaper worker to avoid blocking the compaction worker.
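The write-temp, fsync, rename sequence is a standard POSIX pattern and can be sketched as below. The function name and the caller-supplied temporary path are hypothetical, and the manifest's actual encoding is out of scope; the example only shows the ordering that makes the replacement atomic from a reader's point of view.

```c
#include <errno.h>
#include <fcntl.h>
#include <stdio.h>
#include <string.h>
#include <unistd.h>

/* Writes data to tmp_path, forces it to stable storage, then renames it over path.
 * Returns 0 on success, -1 on failure (the temporary file is removed on failure). */
int atomic_replace_file(const char *path, const char *tmp_path,
                        const void *data, size_t len) {
    int fd = open(tmp_path, O_WRONLY | O_CREAT | O_TRUNC, 0644);
    if (fd < 0) return -1;

    const char *p = data;
    size_t left = len;
    while (left > 0) {
        ssize_t w = write(fd, p, left);
        if (w < 0) {
            if (errno == EINTR) continue;  /* interrupted: retry the write */
            close(fd);
            unlink(tmp_path);
            return -1;
        }
        p += w;
        left -= (size_t)w;
    }

    /* The contents must be durable before the rename makes them visible. */
    if (fsync(fd) != 0) { close(fd); unlink(tmp_path); return -1; }
    if (close(fd) != 0) { unlink(tmp_path); return -1; }

    /* rename() replaces the destination atomically on POSIX file systems. */
    if (rename(tmp_path, path) != 0) { unlink(tmp_path); return -1; }
    return 0;
}
```

A reader that opens the manifest sees either the complete old file or the complete new one, never a partial write. Many storage engines additionally fsync the containing directory so the rename itself survives a crash; whether TidesDB does so is not stated here.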

Handling Corruption During Merge

If a source encounters corruption while its cursor is advancing, the tidesdb_merge_heap_pop() function detects the corruption via checksum failures in the block manager. It returns the corrupted SSTable to the caller for deletion. The corrupted source is removed from the heap, and the merge continues with the remaining sources. This ensures that compaction can complete even if one SSTable is damaged, allowing the system to recover by discarding the corrupted data.

Value Recompression

Large values (those meeting or exceeding the value log threshold) flow through compaction rather than being copied byte-for-byte. The system reads the value from the source value log, recompresses it according to the current column family configuration (which may differ from the original compression setting), and writes the recompressed value to the destination value log. This allows compression settings to evolve over time without requiring a full database rebuild.

Single-Delete and Pair Cancellation

A regular tombstone written by tidesdb_txn_delete has to be carried forward through every compaction until it reaches the largest active level, because any level below the compaction output could still contain an older put for the same key that the tombstone is masking. Dropping the tombstone earlier would re-expose that stale put. Workloads that insert each key once and then delete it once therefore pay a latency tax on reads: every range scan over the deleted region walks across the accumulated tombstones until a compaction at the bottom level finally reaps them.

tidesdb_txn_single_delete lets the caller opt out of that conservatism for keys that satisfy a simple contract: between any two single-deletes on the same key, and between the start of the key’s history and its first single-delete, the key has been put at most once. With that promise the engine is free to drop a put and its matching single-delete together in the first compaction that sees both in the same merge input, regardless of level. Reads treat a single-delete exactly like any other tombstone; the difference lives entirely in the compaction merge.

The single-delete subtype is a second flag bit (TDB_KV_FLAG_SINGLE_DELETE) carried alongside TDB_KV_FLAG_TOMBSTONE in the kv-pair flag byte. The byte is already persisted by both the klog-block and B+tree SSTable formats, so the extra bit does not change the on-disk layout; older binaries that do not examine the single-delete bit still see a tombstone and treat the entry correctly. The bit is preserved through the write path: the WAL encodes it next to the existing tombstone bit, the skip list carries an equivalent SKIP_LIST_FLAG_SINGLE_DELETE bit on each version, memtable flush stamps it onto the flushed SSTable entry, and merge sources surface it on the kv-pair they expose to compaction.

Pair cancellation fires during the merge emit phase. The merge heap delivers same-key versions in descending sequence order, so the first entry popped for a key is the newest surviving version. The emit loop buffers that first-for-key entry as pending and only resolves its fate when the next distinct key arrives. While pending is held, any same-key entries popped behind it are dropped silently (the existing dedup rule). When the pending entry is a single-delete and the next older same-key version is a live put rather than another tombstone, the pair is flagged for cancellation. On resolve, a pending single-delete that paired with a put is dropped outright; a pending regular tombstone that did not pair follows the existing rules (dropped only when merging into the largest level) and unexpired live entries are emitted normally. The same lookahead runs in every emit site: the B+tree writer used by full-preemptive merges into the largest level, the klog-block inline loop of the full-preemptive merge, the klog-block inline loop of the dividing merge, and the klog-block inline loop of the partitioned merge.
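The pending-buffer lookahead can be modelled over an already merged stream. The sketch below is an illustration only: `kv_t`, `kv_kind_t`, and the function name are hypothetical, the input is assumed to arrive in key-ascending, sequence-descending order (as the heap delivers it), TTL handling is omitted, and an unpaired single-delete is assumed to follow the regular tombstone rule.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

typedef enum { KV_PUT, KV_TOMBSTONE, KV_SINGLE_DELETE } kv_kind_t;

typedef struct {
    const char *key;
    uint64_t seq;
    kv_kind_t kind;
} kv_t;

/* Emits surviving entries into out (room for n entries) and returns the count. */
size_t emit_with_pair_cancellation(const kv_t *in, size_t n,
                                   bool into_largest_level, kv_t *out) {
    size_t emitted = 0;
    kv_t pending = {0};
    bool have_pending = false;  /* newest version of the current key is buffered */
    bool paired = false;        /* pending single-delete matched a live put */
    bool saw_older = false;     /* an older same-key version was already seen */

    /* One extra iteration (i == n) resolves the final pending entry. */
    for (size_t i = 0; i <= n; i++) {
        bool same_key = i < n && have_pending &&
                        strcmp(in[i].key, pending.key) == 0;
        if (same_key) {
            /* Only the next older version decides whether the pair cancels. */
            if (!saw_older && pending.kind == KV_SINGLE_DELETE &&
                in[i].kind == KV_PUT) {
                paired = true;
            }
            saw_older = true;
            continue;  /* existing dedup rule: older versions are dropped */
        }
        if (have_pending) {
            /* Resolve: a cancelled pair vanishes at any level; other
             * delete markers vanish only at the largest level. */
            bool drop = paired ||
                        (pending.kind != KV_PUT && into_largest_level);
            if (!drop) out[emitted++] = pending;
        }
        if (i < n) {
            pending = in[i];
            have_pending = true;
            paired = false;
            saw_older = false;
        }
    }
    return emitted;
}
```

Given a single-delete followed by its put for key "a", the pair disappears even when the merge does not target the largest level, whereas a regular tombstone over a put for key "c" is still emitted and only its older put is dropped.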

The partitioned merge’s inline loop has a mid-loop SSTable-split on file_max that is awkward to restructure around a one-step buffer, so it uses a narrower peek-based variant: when a popped single-delete’s key matches the next top-of-heap source’s current key and that source has a live put, the single-delete is dropped immediately and the existing same-key dedup sweeps the put on the next iteration. The net effect is the same for the dominant case where the put and the single-delete arrive adjacent in the merge input.

Calling tidesdb_txn_single_delete on a key that has been put more than once since the last single-delete is a contract violation; the engine cannot detect it, and the result is that only the most recent put is masked while older puts remain visible. Callers that cannot guarantee the contract must use tidesdb_txn_delete instead.

Targeted Range Compaction

The tombstone density trigger handles cleanup automatically, but operators sometimes need to reclaim space immediately for a known key range. Bulk reclaim after a large range delete, tenant eviction, and sliding-window expiration that does not fit TTL semantics all share the same shape: the caller knows the affected range, and waiting for the next natural compaction is unacceptable.

tidesdb_compact_range(cf, start_key, start_size, end_key, end_size) provides a synchronous, targeted compaction over a key range. The function snapshots each level, selects only those SSTables whose minimum and maximum keys overlap the requested range using the column family’s comparator, and runs a dedicated merge over the overlapping inputs. SSTables outside the range are not touched. The merge follows the same emit-loop logic as the automatic merges, including tombstone reclamation rules, single-delete pair cancellation, sequence-based deduplication, and value recompression, so the output is consistent with what a background compaction would produce.
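The SSTable selection step amounts to an interval-overlap test on each table's minimum and maximum keys. The sketch below is a minimal model: `key_ref`, `sst_meta`, and the function names are hypothetical, a bytewise `memcmp` comparison stands in for the column family's comparator, and both ends of the requested range are treated as inclusive, which is an assumption rather than documented behaviour.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* A key as a pointer plus length, mirroring the (key, size) argument pairs. */
typedef struct {
    const void *data;
    size_t size;
} key_ref;

typedef struct {
    key_ref min_key;
    key_ref max_key;
} sst_meta;

/* Bytewise comparison; a shorter key that is a prefix of a longer one sorts first. */
static int compare_keys(key_ref a, key_ref b) {
    size_t m = a.size < b.size ? a.size : b.size;
    int c = memcmp(a.data, b.data, m);
    if (c != 0) return c;
    return (a.size > b.size) - (a.size < b.size);
}

/* Two closed intervals overlap when each one starts before the other ends. */
bool sst_overlaps_range(const sst_meta *sst, key_ref start, key_ref end) {
    return compare_keys(sst->min_key, end) <= 0 &&
           compare_keys(sst->max_key, start) >= 0;
}

/* Writes the indices of overlapping SSTables into picked and returns how many. */
size_t select_overlapping(const sst_meta *ssts, size_t n, key_ref start,
                          key_ref end, size_t *picked) {
    size_t count = 0;
    for (size_t i = 0; i < n; i++) {
        if (sst_overlaps_range(&ssts[i], start, end)) picked[count++] = i;
    }
    return count;
}
```

Tables whose key range lies entirely before `start` or entirely after `end` are never opened, which is what keeps a targeted compaction proportional to the size of the requested range.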

The implementation lives entirely in the compaction layer as the static helper tidesdb_targeted_merge, sharing the merge heap, block writer, and manifest commit machinery with the existing merge modes. The call is synchronous and blocks until the merge commits or fails, returning the standard error codes. There is no separate worker dispatch and no queue; the caller’s thread does the work.

The same tidesdb_targeted_merge helper is reused by the tombstone density witness path described under Tombstone Density Trigger. When the post-flush witness finds a sufficiently dense SSTable it builds a range from that SSTable’s min and max keys and runs the merge straight to the largest active level. The public tidesdb_compact_range API and the automatic witness path therefore share one implementation, which keeps tombstone reclamation rules, single-delete pair cancellation, sequence-based deduplication, and value recompression identical regardless of which trigger fired.